Independent data collected from developer surveys, open-source repository telemetry, and workforce research shows that AI-assisted coding tools are now widely used among professional software developers across most major economies.

However, adoption is uneven by country and far more complex than headline statistics suggest. While trial rates approach saturation in some regions, depth of integration, trust, and productivity outcomes vary significantly.

This article presents a consolidated, evidence-driven analysis of AI coding tools adoption statistics by country, covering 2024–2025 with a conservative outlook for 2026.

Defining AI coding tools (scope)

This article focuses on AI-assisted coding tools used by professional developers, including:

- IDE-integrated code completion and suggestion systems

- Natural-language-to-code assistants

- Refactoring, testing, and documentation assistants

Excluded from scope:

- Student-only usage

- Low-code/no-code platforms

- General-purpose chatbots used outside coding workflows

Global baseline: adoption among professional developers

Across multiple independent datasets in 2024–2025:

- 76–85% of professional developers report using AI coding tools

- ~50% report daily usage

- 15–20% report no use or active avoidance

This indicates widespread exposure but uneven operational reliance.

Trial vs sustained usage

A critical distinction often missed:

- “Have you ever used an AI coding tool?” → near saturation

- “Do you rely on AI tools daily?” → roughly half

This gap explains many contradictions in productivity claims.

AI coding tools adoption statistics by country

Country Comparison Chart of Professional Developers using AI coding tools

| Country | Developer Trial Rate* | Estimated Daily Usage | AI-Assisted Share of New Code** | Enterprise Allowance Level | Key Constraint |
|---|---|---|---|---|---|
| United States | ~99% | ~55% | ~29% | High | Security & code quality |
| India | ~99% | ~50% | ~20% | Medium–High | Review overhead |
| Germany | ~97% | ~45% | ~23% | Medium | Regulation & IP risk |
| France | n/a | ~45–50% | ~24% | Medium | Enterprise governance |
| United Kingdom | ~95–98% | ~50% | ~25% (est.) | High | Trust & compliance |
| Brazil | ~99% | ~45–50% | n/a | Medium | Infrastructure variance |
| China | n/a | ~30–35% | ~12% | Medium | Model access & policy |
| Japan | ~90–95% | ~40% | ~18% (est.) | Medium | Language/model fit |
| South Korea | ~90–95% | ~45% | ~20% (est.) | Medium | Enterprise caution |
| Global Average | ~76–85% | ~50% | n/a | Mixed | Trust & security |

Footnotes

\* Developer Trial Rate: percentage of professional developers who report having used an AI coding tool at least once. Derived from multi-country independent developer surveys (Stack Overflow, JetBrains).

\*\* AI-Assisted Share of New Code: percentage of newly committed public-source code inferred to be AI-generated or AI-assisted. Derived from peer-reviewed open-source repository telemetry (Python repositories).

Why survey and telemetry differ

Surveys measure self-reported behavior.

Telemetry measures observable output.

Countries often show high survey usage but lower code-share integration.
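To make that gap concrete, the sketch below takes the daily-usage and code-share figures from the comparison table and computes the difference in percentage points. It rests on two assumptions: ranges such as ~45–50% are represented by their midpoint, and Brazil is omitted because the table gives no code-share figure for it. `ROWS` and `usage_gap` are illustrative names, not part of any cited dataset.

```python
# Daily usage (%) and AI-assisted share of new code (%) from the comparison table.
# Ranges are represented by their midpoint; this is a simplifying assumption.
ROWS = {
    "United States": (55.0, 29.0),
    "India": (50.0, 20.0),
    "Germany": (45.0, 23.0),
    "France": (47.5, 24.0),
    "United Kingdom": (50.0, 25.0),
    "China": (32.5, 12.0),
    "Japan": (40.0, 18.0),
    "South Korea": (45.0, 20.0),
}


def usage_gap(rows):
    """Return {country: daily usage minus code share}, in percentage points."""
    return {country: daily - share for country, (daily, share) in rows.items()}


gaps = usage_gap(ROWS)
for country, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{country:<15} {gap:5.1f} pp")
```

Under these assumptions India shows the widest gap (30 points) and China the narrowest (20.5). The two columns measure different things (share of developers versus share of code), so the difference is a rough indicator rather than a precise measure.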

Regional patterns and drivers

United States

The US leads on most adoption metrics.

Key drivers:

- Early access to AI coding tools

- High cloud and IDE penetration

- Strong enterprise permissiveness

Roughly 29% of new public Python code shows signs of AI assistance, the highest share among the countries compared.

India

India shows near-universal trial adoption.

Drivers include:

- Large, young developer workforce

- Strong outsourcing and export incentives

- Rapid uptake of productivity and automation tools

However, deeper integration still lags the US slightly.

Western Europe (Germany, France)

Adoption is strong but cautious.

Characteristics:

- High individual usage

- Lower enterprise encouragement

- Strong regulatory and compliance concerns

Germany illustrates the “high use, low trust” pattern.

China

China lags on observable AI code share.

Key constraints:

- Restricted access to Western LLMs

- Regulatory scrutiny of AI outputs

- Reliance on emerging domestic models

Adoption is growing, but uneven.

Usage intensity and workflow integration

Daily vs occasional users

Independent surveys show:

- ~50% daily users

- ~25% weekly or occasional

- ~25% minimal or no use

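This distribution holds broadly across the countries compared, although estimated daily usage ranges from ~30–35% in China to ~55% in the United States.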

What developers actually use AI for

Most common use cases:

- Boilerplate code generation

- Syntax completion

- Documentation and comments

- Language translation

Less common:

- Architecture decisions

- Security-critical logic

Productivity effects: measured, not claimed

Aggregate productivity impact

Independent academic analysis estimates:

- ~3–5% average productivity gain

- Gains concentrated in:

  - Experienced developers

  - Well-structured codebases

This is meaningful but not transformative.
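As a rough worked example of what a 3–5% gain means in practice, the sketch below converts it into full-time-equivalent (FTE) capacity. The team size of 20 and the `fte_equivalent` helper are assumptions chosen purely for illustration.

```python
def fte_equivalent(team_size, gain_pct):
    """Extra capacity, in full-time equivalents, from a uniform percentage gain."""
    return team_size * gain_pct / 100


TEAM_SIZE = 20  # hypothetical team, not a figure from the research

low = fte_equivalent(TEAM_SIZE, 3)
high = fte_equivalent(TEAM_SIZE, 5)
print(f"{low:.1f} to {high:.1f} FTE")
```

For a 20-person team this works out to roughly 0.6 to 1.0 additional developer's worth of output, and only if the gain were spread evenly, which the concentration noted above suggests it is not.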

Experience-level paradox

Observed pattern:

- Junior developers use AI more

- Senior developers benefit more

This creates uneven productivity distribution within teams.

Negatives and failure modes

Security vulnerabilities

Independent audits consistently find:

- ~45% of AI-generated code contains security flaws

- Frequent issues include:

  - SQL injection

  - Unsafe deserialization

  - Weak cryptography

Manual code review remains essential.
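To illustrate the first issue on that list, the sketch below contrasts a string-built query with a parameterized one using Python's standard `sqlite3` module. The `users` table, the sample rows, and both function names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "viewer")],
)


def find_user_unsafe(name):
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    # Safer: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "nobody' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected OR clause matches every row
print(len(find_user_safe(payload)))    # 0: the payload is treated as a literal name
```

Both functions look plausible at a glance, which is why this pattern tends to slip through when generated code is accepted without review.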

Toolchain attack surfaces

Recent research shows:

- Prompt injection can compromise IDEs

- AI agents may access unintended files

- Malicious dependencies can exploit AI behavior

These risks scale with deeper integration.
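One common mitigation for unintended file access is to confine an agent to the project directory. The following is a minimal sketch of such a check, assuming Python 3.9+ (for `Path.is_relative_to`). The `is_path_allowed` helper and the example paths are hypothetical and not taken from any particular tool.

```python
import tempfile
from pathlib import Path


def is_path_allowed(requested, workspace):
    """Return True only if `requested` resolves to a location inside `workspace`."""
    root = Path(workspace).resolve()
    # Relative paths are interpreted against the workspace; resolve() collapses
    # ".." segments and follows symlinks before the containment check.
    target = (root / requested).resolve()
    return target.is_relative_to(root)


# A temporary directory stands in for the project workspace.
with tempfile.TemporaryDirectory() as workspace:
    inside = is_path_allowed("src/main.py", workspace)
    traversal = is_path_allowed("../../etc/passwd", workspace)
    absolute = is_path_allowed("/etc/passwd", workspace)

print(inside, traversal, absolute)  # True False False
```

A check like this narrows one attack path only; it does not address prompt injection or malicious dependencies.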

Code quality issues

Common failures:

- Incorrect logic with valid syntax

- Hallucinated libraries or APIs

- Misaligned architectural patterns

Developers report time lost validating AI output.
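Part of that validation can be automated. As one example, the sketch below flags imports in generated Python code that cannot be found in the current environment, a cheap first check for hallucinated libraries. It uses the standard-library `ast` and `importlib.util.find_spec`; the `missing_imports` helper and the module name `totally_made_up_pkg` are hypothetical.

```python
import ast
import importlib.util


def missing_imports(source):
    """Return top-level module names imported by `source` that cannot be found."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports, which refer to local packages.
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)


generated = """
import json
from pathlib import Path
import totally_made_up_pkg
"""

print(missing_imports(generated))  # ['totally_made_up_pkg']
```

This only confirms that a module is installed. It does not verify that the functions called on it exist, or that a package with a plausible-sounding name is trustworthy.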

Trust erosion

Survey trends show:

- Declining trust year-over-year

- Fewer than half of developers fully trust AI output

- Increased verification overhead

Adoption outpaces confidence.

Skill atrophy concerns

Developers report concerns about:

- Reduced problem-solving practice

- Shallow understanding of generated code

- Over-dependence on suggestions and summaries

Long-term effects remain unquantified.

Enterprise vs individual adoption gap

Individual behavior

Most developers experiment freely.

They adopt tools opportunistically.

Enterprise governance

Organizations are more cautious.

Barriers include:

- IP leakage concerns

- Compliance requirements

- Auditability of AI outputs

This gap creates shadow usage.

What most articles miss

Adoption is multi-stage

Stages include:

1. Trial and experimentation

2. Regular individual use

3. Developer workflow integration

4. Enterprise standardization

Most countries are between stages 2 and 3.

Code share matters more than surveys

Telemetry reveals:

- Real usage intensity

- Structural differences missed by polling

Future research should prioritize output-based metrics.

Local ecosystems distort global comparisons

Non-English and domestic models:

- Change adoption dynamics

- Are underrepresented in Western datasets

Country comparisons must account for this bias.

Adoption ≠ impact

High usage does not guarantee:

- Higher quality

- Faster delivery

- Better security

Outcomes depend on governance and skill.

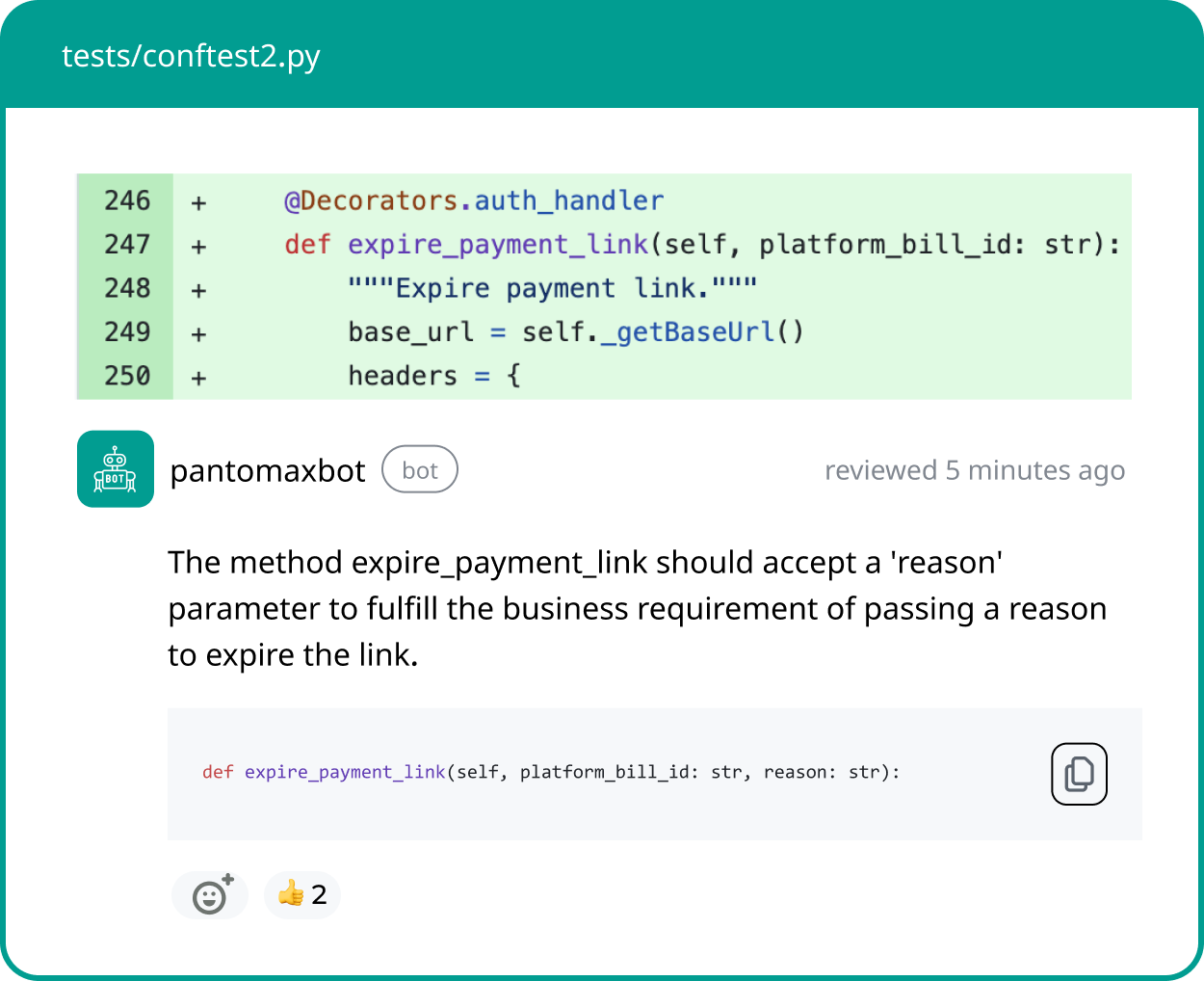

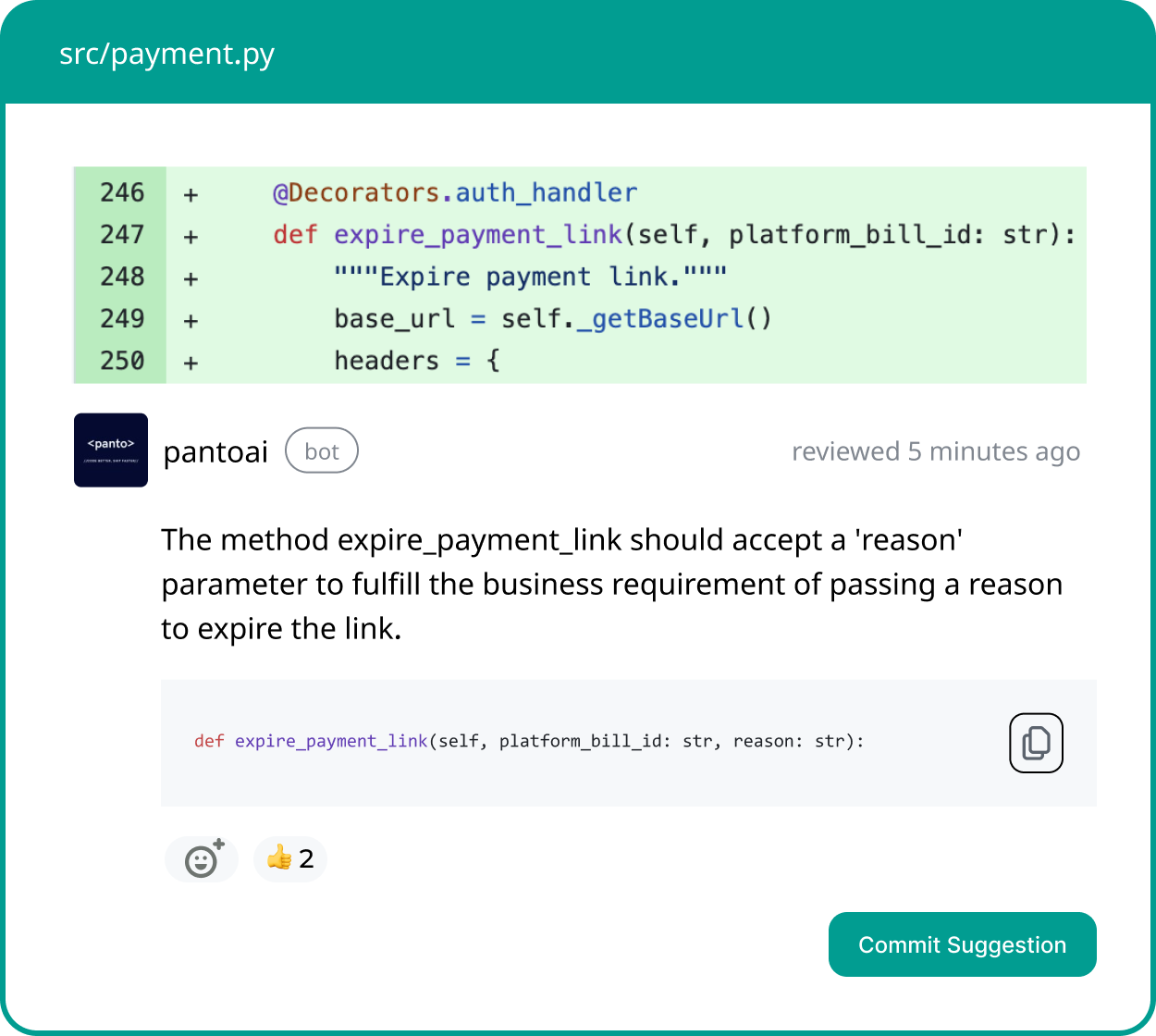


2026 outlook (conservative)

Expected trends

- Trial adoption plateaus globally

- Enterprise integration increases gradually

- Security tooling becomes mandatory

No evidence supports exponential gains.

Country-level outlook

- US: marginal growth, deeper integration

- India: continued growth, stronger governance

- Europe: cautious expansion with regulation

- China: moderate catch-up via domestic models

Gaps narrow slowly.

What will not happen

Unlikely by 2026:

- Massive developer displacement

- Autonomous software engineering

- Order-of-magnitude productivity jumps

Evidence does not support these narratives.

Conclusion

According to an independent analysis of AI coding tools adoption statistics, professional developers across countries have largely embraced AI-assisted coding—but not without reservation.

Trial adoption is near saturation, yet trust, security, and integration maturity lag behind usage. Country differences are driven less by technical capability and more by regulation, enterprise policy, and developer demographics.

The defining question for 2026 is no longer whether developers use AI coding tools, but how safely, deeply, and effectively they are embedded into real-world software workflows.

FAQs

Q: Which country is leading in AI adoption?

A: Leadership depends on the metric used. In terms of developer-level AI coding tool trial and daily usage, the United States currently leads, with near-saturation trial rates and the highest observable AI-assisted code share in public repositories. However, countries like India show comparable trial rates with slightly lower integration depth. When measured by enterprise integration and regulatory clarity, the ranking shifts. No single country leads across all dimensions—usage intensity, enterprise governance, and regulatory maturity vary significantly.

Q: Which country uses the most artificial intelligence?

A: The answer depends on whether “use” refers to consumer AI, enterprise AI systems, or AI-assisted software development. In professional software development, the United States shows the highest observable AI-assisted contribution to new public code. India demonstrates comparable individual experimentation levels but slightly lower sustained daily reliance. China’s adoption is growing but influenced by domestic model availability and policy constraints. Usage leadership is therefore domain-specific rather than absolute.

Q: What is the adoption rate of AI tools?

A: Across professional developers globally, 76–85% report having used AI coding tools at least once. Daily usage averages around 50%, with roughly another 25% using them weekly or occasionally. Enterprise-wide standardization remains lower than individual experimentation. Trial adoption is near saturation in many major economies, but deep workflow integration is still maturing. Trial adoption plateaued in 2024–2025, and further growth is expected to be gradual rather than exponential.

Q: What percentage of developers use AI tools?

A: Approximately 76–85% of professional developers report using AI coding tools in some capacity. Around half report daily usage integrated into their workflow. A smaller segment (roughly 15–20%) reports no use or actively avoids such tools. Usage is higher for boilerplate generation and documentation tasks than for architecture or security-critical logic. Frequency and depth of reliance vary more than headline trial percentages suggest.

Q: Is AI adoption higher in the US than in Europe?

A: In software development contexts, the US demonstrates slightly higher daily usage and a greater share of AI-assisted public code contributions. European countries such as Germany and France show strong individual adoption but more cautious enterprise policies due to regulatory and compliance considerations. This creates a gap between experimentation and organizational standardization. Adoption is therefore comparable at the individual level but more conservative at the governance level in parts of Europe.

Q: Are AI coding tools widely adopted in India?

A: Yes. India shows near-universal trial exposure among professional developers, driven by a large and rapidly expanding technical workforce. Daily usage levels are high but slightly below US averages in measurable integration depth. Review overhead and enterprise governance constraints affect sustained reliance. Adoption is strong and growing, particularly in outsourcing and product engineering environments.

Q: Does high AI adoption mean higher productivity?

A: Not necessarily. Independent research suggests average productivity gains of roughly 3–5%, concentrated among experienced developers and structured codebases. High trial usage does not automatically translate to measurable delivery acceleration. Verification overhead and security review requirements can offset gains in some contexts. Adoption and impact are related but not equivalent.

Q: How much of new code is AI-generated or AI-assisted?

A: Telemetry-based studies of public repositories estimate that roughly 20–30% of newly committed code in some ecosystems shows signs of AI assistance, with variation by country and language. The United States trends toward the higher end of that range. This metric is lower than self-reported usage because it measures observable output rather than tool interaction. AI assistance is more prevalent in boilerplate and repetitive segments than in complex architectural components.

Q: Is AI adoption among developers still increasing?

A: Growth is slowing compared to the initial surge following large-model releases. Trial rates are near saturation in major markets, so incremental growth now comes from deeper integration rather than first-time use. Enterprise governance frameworks are evolving, which may gradually increase standardized usage. Significant acceleration beyond current levels is unlikely without major capability breakthroughs.

Q: Why do adoption statistics vary between surveys and repository data?

A: Surveys capture self-reported behavior, including experimentation and occasional use. Repository telemetry measures observable AI-influenced output, which reflects sustained integration. Developers may use AI frequently without every interaction resulting in committed code. This explains why reported usage rates often exceed measurable AI-assisted code share. The two datasets measure different layers of adoption maturity.