AI coding assistants are now mainstream developer tools. By 2025, a majority of professional developers use AI in their daily work, and controlled experiments show large task-level speedups. Yet organizational results are inconsistent.

Many teams report faster coding but little improvement in delivery velocity or business outcomes.

This article synthesizes independent surveys, controlled experiments, enterprise analytics, and security research to answer a core question:

Does AI coding actually improve productivity at the organizational level, or does it only make individual developers feel faster?

Definition: AI Coding Productivity

AI coding productivity refers to the measurable impact of AI-assisted development tools on software delivery.

It includes task completion speed, code quality, review effort, security outcomes, and end-to-end delivery metrics such as lead time and change failure rate.

Critically, productivity is not synonymous with typing speed or lines of code. True productivity is the rate at which high-quality software creates business value.

Adoption in 2025: AI Is No Longer Optional

AI coding tools moved from novelty to default.

- Approximately 84 percent of developers report using or planning to use AI tools for code generation or code review.

- 51 percent of professional developers use them daily.

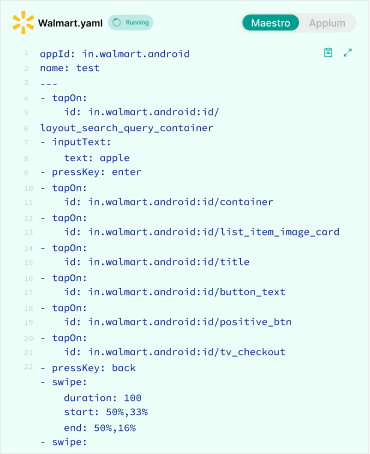

- Adoption is highest for frontend, scripting, and test generation tasks.

Usage intensity varies, but the direction is clear: AI assistance is now part of the standard developer workflow.

Key takeaway: Adoption is no longer the differentiator. Outcomes are.

What Controlled Experiments Actually Prove

Controlled lab experiments provide the cleanest causal evidence.

One widely cited experiment found developers completed a representative coding task 55 percent faster with AI assistance. Similar experiments replicate large speedups for scoped tasks.

Important constraints:

- Tasks are short and well defined

- Integration, review, and deployment are excluded

- Results measure task speed, not system throughput

Conclusion: AI reliably reduces low-level friction such as syntax recall, boilerplate generation, and API lookup.

Field Studies: Why Organizational Gains Are Inconsistent

When AI tools are deployed across real teams, results diverge.

Enterprise analytics and randomized trials show:

- Some teams improve throughput

- Many teams see negligible change

- A subset experiences quality regressions that offset speed gains

Why This Happens

- Measurement mismatch: Teams track perceived speed instead of delivery metrics.

- Bottleneck migration: Faster coding shifts load to reviews, QA testing, and integration.

- Rework costs: AI-generated code can introduce subtle defects that increase downstream work.
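
Bottleneck migration can be illustrated with a toy pipeline model. The sketch below is an illustration only: the stage names and weekly capacities are hypothetical numbers, not data from the studies discussed here, and it assumes sustained delivery throughput is capped by the slowest stage.

```python
# Toy model: delivery throughput is capped by the slowest stage.
# Stage names and capacities (changes per week) are hypothetical.

def throughput(capacities: dict[str, float]) -> float:
    """Sustained throughput equals the smallest stage capacity."""
    return min(capacities.values())


def bottleneck(capacities: dict[str, float]) -> str:
    """Name of the stage that currently limits throughput."""
    return min(capacities, key=capacities.get)


before = {"coding": 20, "review": 25, "qa": 30}
# Assumption: AI assistance raises coding capacity by 50 percent,
# while review and QA capacity stay the same.
after = {**before, "coding": before["coding"] * 1.5}

print(bottleneck(before), throughput(before))  # coding 20
print(bottleneck(after), throughput(after))    # review 25
```

In this made-up scenario, coding capacity rises by 50 percent but throughput rises only from 20 to 25 changes per week, because review becomes the limiting stage.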

Security and Quality Tradeoffs

Independent security research highlights real risks:

- AI summaries and suggestions may reproduce insecure patterns

- Validation logic and error handling are often incomplete

- Code is committed faster than security review capacity grows

Net effect: verification burden increases until tooling and governance mature.

Key takeaway: AI accelerates both value creation and risk creation.

Perception vs Reality: The Productivity Paradox

Surveys show developers feel more productive and satisfied when using AI tools. However, organizational metrics often lag.

This perception gap matters:

- Leaders may overinvest based on sentiment

- Teams may optimize for speed instead of outcomes

Without instrumentation, perceived productivity becomes a misleading signal.

The Metrics That Actually Determine AI Coding ROI

Stop using raw output metrics. Use a balanced system.

| Metric Category | Recommended Measures | Why It Matters |
| --- | --- | --- |
| Flow | Lead time, deployment frequency | Captures delivery speed |
| Quality | Post-release defects, security findings | Measures hidden cost |
| Review | PR size, review time | Shows reviewer load |
| Experience | Time in flow, blocker resolution | Indicates sustainability |
| Business | Time to market, revenue impact | Ties work to value |

Key takeaway: AI improves productivity only when these metrics move together.
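
As a rough illustration of the flow and quality measures in the table, the sketch below computes median lead time, deployment frequency, and change failure rate from a list of deployment records. The `Deployment` record, its field names, and the sample data are assumptions made for this example; real data would come from your own version control and deployment tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    """Hypothetical record; field names are assumptions, not a tool's schema."""
    committed_at: datetime  # first commit of the change
    deployed_at: datetime   # when the change reached production
    caused_failure: bool    # needed a rollback or hotfix


def median_lead_time_hours(deploys: list[Deployment]) -> float:
    """Median time from first commit to production, in hours."""
    hours = [(d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys]
    return median(hours)


def deployments_per_week(deploys: list[Deployment], window_days: int) -> float:
    """Average number of deployments per week over the observation window."""
    return len(deploys) / (window_days / 7)


def change_failure_rate(deploys: list[Deployment]) -> float:
    """Share of deployments that caused a failure in production."""
    return sum(d.caused_failure for d in deploys) / len(deploys)


# Made-up sample: four deployments observed over a 14-day window.
t0 = datetime(2025, 1, 6, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(hours=24), False),
    Deployment(t0 + timedelta(days=2), t0 + timedelta(days=2, hours=48), True),
    Deployment(t0 + timedelta(days=7), t0 + timedelta(days=7, hours=12), False),
    Deployment(t0 + timedelta(days=9), t0 + timedelta(days=9, hours=36), False),
]

print(median_lead_time_hours(deploys))    # 30.0
print(deployments_per_week(deploys, 14))  # 2.0
print(change_failure_rate(deploys))       # 0.25
```

Tracking these three numbers side by side, before and after an AI rollout, is one way to check whether speed and quality are moving together.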

Comparison: Individual Speed vs Organizational Throughput

| Dimension | Individual Productivity | Organizational Productivity |
| --- | --- | --- |
| Primary driver | Coding speed | Flow efficiency |
| AI impact | High | Conditional |
| Failure mode | Overconfidence | Bottleneck amplification |
| Success factor | Task assistance | Process redesign |

Governance Practices That Capture Net Value

Organizations that realize gains implement controls:

- Automated testing gates with higher assertion coverage

- Security and secret scans tuned for AI failure patterns

- PR size caps and paired reviews for AI-heavy changes

- Prompt templates favoring minimal, secure outputs

- Training on AI failure modes

- Incentives aligned to code quality, not output volume
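
One of the controls above, PR size caps with paired reviews for AI-heavy changes, could be expressed as a simple policy check. This is a minimal sketch under stated assumptions: the 400-line cap, the `ai_heavy` flag (imagined here as a label the author applies to the pull request), and the function name are all hypothetical and would need tuning per team.

```python
MAX_CHANGED_LINES = 400  # hypothetical cap; tune per team


def review_requirements(changed_lines: int, ai_heavy: bool) -> list[str]:
    """Return the extra steps a pull request needs before it can merge."""
    requirements = []
    if changed_lines > MAX_CHANGED_LINES:
        # Oversized changes are hard to review well, so ask for a split.
        requirements.append("split PR")
    if ai_heavy:
        # Assumption: AI-heavy changes get a second (paired) reviewer.
        requirements.append("second reviewer")
    return requirements


print(review_requirements(120, ai_heavy=False))  # []
print(review_requirements(650, ai_heavy=True))   # ['split PR', 'second reviewer']
```

A check like this could run in CI and post its result on the pull request, so the policy is applied the same way to every change.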

Role-Specific Effects

- Junior developers gain the most speed but require oversight

- Mid-level developers benefit in integration and debugging

- Senior engineers gain indirectly through leverage and review efficiency

This shift changes role expectations and hiring signals.

Forecast to 2026

Evidence-based expectations:

- Tool quality improves, reducing verification overhead

- Measurement maturity becomes the differentiator

- Organizations reward judgment and systems thinking over raw output

Risk remains for teams that adopt tools without governance.

Key Takeaways

- AI coding tools increase individual speed reliably

- Organizational gains require process and metric changes

- Security and code quality costs are real and measurable

- Measurement, not adoption, determines ROI

Conclusion

AI coding assistants are now standard tools. Controlled experiments prove task-level speedups. Field evidence shows that without changes in measurement and governance, those gains rarely translate into business outcomes.

Organizations that instrument delivery metrics, strengthen review and security practices, and retrain teams will convert AI-driven speed into durable productivity in 2026.

Suggested Next Step

Before expanding AI usage, baseline delivery and quality metrics for one quarter, then pilot AI tools with explicit governance and review constraints.
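
A baseline-versus-pilot comparison might look like the sketch below. The metric names, the thresholds (`min_speedup`, `max_cfr_increase`), and the sample numbers are assumptions chosen for illustration. The point is that a pilot counts as a net gain only if lead time improves and change failure rate does not regress.

```python
def pilot_verdict(
    baseline: dict[str, float],
    pilot: dict[str, float],
    min_speedup: float = 0.10,       # hypothetical: at least 10% shorter lead time
    max_cfr_increase: float = 0.02,  # hypothetical: at most +2 points failure rate
) -> str:
    """Classify a pilot by comparing speed and quality against the baseline."""
    speedup = 1 - pilot["lead_time_hours"] / baseline["lead_time_hours"]
    cfr_delta = pilot["change_failure_rate"] - baseline["change_failure_rate"]
    faster = speedup >= min_speedup
    quality_held = cfr_delta <= max_cfr_increase

    if faster and quality_held:
        return "net gain"
    if faster:
        return "speed gain offset by quality regression"
    if quality_held:
        return "no meaningful change"
    return "regression"


# Made-up numbers for one baseline quarter and two possible pilot outcomes.
baseline = {"lead_time_hours": 40.0, "change_failure_rate": 0.10}
pilot_a = {"lead_time_hours": 30.0, "change_failure_rate": 0.18}
pilot_b = {"lead_time_hours": 32.0, "change_failure_rate": 0.11}

print(pilot_verdict(baseline, pilot_a))  # speed gain offset by quality regression
print(pilot_verdict(baseline, pilot_b))  # net gain
```

Fixing the thresholds before the pilot starts helps keep the verdict from being shaped by how fast the tools feel in day-to-day use.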

FAQs

Q: Does AI actually boost developer productivity?

Yes — at the task level. Controlled experiments consistently show significant speed improvements (often 30–55%) for scoped programming tasks such as writing functions, generating tests, or producing boilerplate. However, organizational productivity improves only when process bottlenecks (review, QA, security, integration) are also addressed.

Q: Why do developers feel more productive with AI even when company metrics don’t improve?

AI reduces cognitive friction (syntax recall, documentation lookup, scaffolding), which creates a strong perception of speed. But delivery metrics such as lead time, defect rate, and deployment frequency often remain unchanged because bottlenecks migrate downstream to review and validation stages.

Q: What metrics should teams track to measure AI coding ROI?

Avoid lines of code or commit volume. Instead track lead time for changes, deployment frequency, post-release defect rate, security findings, pull request size and review time, and change failure rate. AI creates ROI only if speed and quality metrics improve together.

Q: Do AI coding assistants reduce code quality?

Not inherently — but they can increase verification burden. Independent research shows AI-generated code may reproduce insecure patterns, omit edge-case validation, or generate verbose but shallow logic. Without stronger review gates, quality regressions can offset speed gains.

Q: Which developers benefit most from AI coding tools?

Junior developers typically see the largest speed gains but require the highest supervision. Mid-level developers gain strong productivity improvements in integration and debugging. Senior engineers benefit indirectly through leverage, architectural focus, and review acceleration. The net benefit depends heavily on governance maturity.

Q: Why don’t AI speed gains automatically improve delivery velocity?

Because of bottleneck migration. When coding accelerates, pull request volume increases, review queues grow, QA becomes saturated, and security validation lags. Throughput only increases when the entire delivery system adapts.

Q: Is AI coding adoption universal in 2025?

Adoption is mainstream. Surveys indicate that about 84 percent of developers use or plan to use AI tools, and 51 percent of professional developers use them daily. However, adoption alone does not predict improved outcomes; measurement and governance determine impact.

Q: Does AI coding increase security risk?

Potentially, yes. Common risk patterns include hardcoded secrets, incomplete authentication checks, copying insecure public code patterns, and missing input validation. Organizations must upgrade automated scanning and review processes accordingly.

Q: How can organizations safely expand AI coding usage?

Best-practice governance includes strong automated test coverage, secret and dependency scanning tuned for AI outputs, pull request size limits, paired reviews for AI-heavy changes, training on AI failure modes, and incentives tied to quality rather than output volume. Without governance, AI amplifies risk along with speed.

Q: Will AI coding tools improve organizational productivity by 2026?

Likely — but conditionally. Evidence suggests gains will materialize where delivery metrics are instrumented, verification automation matures, and teams prioritize systems thinking over raw output. Organizations that optimize for typing speed alone will not see durable productivity gains.