AI coding has moved from curiosity to core developer tooling. In 2026, models and assistants are embedded in editors, CI/CD, and documentation workflows — and organizations now measure both the upside (time saved, throughput gains) and the downside (defects, security findings, governance needs).

This article covers adoption and daily usage, developer productivity and output, trust and code-quality tradeoffs, enterprise deployment signals, and market sizing. Key metrics include developer adoption rates, average hours saved, differential defect rates for AI-assisted code, GitHub Copilot reach, and market estimates from analyst firms.

AI Coding Key Insights & Takeaways

- 84% of developers say they use or plan to use AI tools in their development process.

- 51% of professional developers report using AI tools daily.

- ~3.6 hours per week: average time saved per developer using AI coding tools.

- 20M+ all-time users for GitHub Copilot (announced mid-2025).

- AI-coauthored PRs show ~1.7× more issues than human-only PRs.

- AI adoption reached 91% across DX’s sample of 135,000+ developers, indicating near-universal usage in many orgs.

- 22% of merged code was AI-authored.

- Daily AI users merge ~60% more PRs than light users.

- Gartner estimated the 2025 AI code-assistant market at $3.0–$3.5 billion.

- McKinsey’s 2025 global AI research highlights software engineering as one of the top functions to capture economic value from AI (≈25% of potential value in some models).

Top AI Coding Statistics Summary Table

| Metric | 2025–2026 figure |

|---|---|

| Developers using or planning to use AI tools | 84% |

| Professional developers using AI daily | 51% |

| Average time saved per developer/week | ~3.6 hours |

| Share of merged code AI-authored (DX sample) | 22% |

| AI-coauthored PRs — issues vs human PRs | ~1.7× more issues |

| GitHub Copilot all-time users (mid-2025) | 20M+ |

| Gartner 2025 AI code-assistant market estimate | $3.0–$3.5B |

| DX sample AI adoption (developers) | 91% |

Key headline statistics

- 84% of developers use or plan to use AI tools.

- 51% of professional developers use AI daily.

- ~3.6 hours/week saved on average per developer.

- 20M+ all-time users for GitHub Copilot.

- ~1.7× more issues in AI-coauthored PRs.

AI Coding Statistics: Deep Dive

1. AI Coding User Growth & Adoption Statistics

Developer adoption

Large developer surveys show near-universal awareness and high adoption:

- The Stack Overflow 2025 Developer Survey reports 84% of respondents are using or plan to use AI tools; 51% of professional developers use AI daily.

- This survey covers tens of thousands of responses and is a leading annual signal of developer behavior.

- The JetBrains State of Developer Ecosystem 2025 finds ~85% regular AI usage and that 62% rely on at least one coding assistant or agent, reinforcing the same widespread adoption pattern.

Measured usage

- Vendor and telemetry datasets show even higher penetration in active developer cohorts.

- DX’s Q4 2025 impact report (analysis of 135k+ developers) reports 91% AI adoption within their sample and 22% of merged code being AI-authored.

- These analytics help translate survey intent into measurable activity in repos and pipelines.

| Year | Representative adoption indicator |

|---|---|

| 2023 | Early mainstreaming; varying vendor signals |

| 2024 | 60–76% |

| 2025 | 84% using or planning to use |

| 2026 | Broad production use; daily usage ~51% among pros |

2. AI Coding Productivity & Financial Statistics

Time Savings And Throughput

- DX’s analysis across their 135k+ developer sample reports an average of 3.6 hours/week saved per developer when using AI coding tools; daily users show even larger gains and higher PR throughput (daily users merge ~60% more PRs than light users).

- Stack Overflow’s survey data points to stronger use among early-career developers, which makes learning velocity and ramp time worth measuring alongside throughput.
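The "~60% more PRs" comparison is a relative difference between two cohort averages. A minimal sketch of that calculation follows; the function name and the per-developer PR counts are made up for illustration, and DX's actual cohort definitions and methodology are not described here.

```python
from statistics import mean


def throughput_uplift(daily_user_prs: list[int], light_user_prs: list[int]) -> float:
    """Percent difference in mean merged PRs between daily and light AI users."""
    baseline = mean(light_user_prs)
    if baseline == 0:
        raise ValueError("light-user baseline must be non-zero")
    return (mean(daily_user_prs) - baseline) / baseline * 100


# Made-up merged-PR counts per developer over the same period.
daily = [8, 9, 7]
light = [5, 5, 5]

if __name__ == "__main__":
    print(f"Daily users merge {throughput_uplift(daily, light):.0f}% more PRs")
```

Comparing cohorts over the same time window and similar team types matters more than the formula itself; a raw uplift can reflect who chooses to use AI daily as much as the effect of the tools.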

Financial Proxies & ROI Signals

- Time saved compounds quickly in team budgets: 3.6 hours/week ≈ 187 hours/year saved per developer (assuming 52 working weeks).
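The annualized figure can be reproduced, and extended to a team budget, with a short sketch. Only the 3.6 hours/week value comes from the DX analysis; the team size and loaded hourly cost are hypothetical placeholders, and treating every saved hour as redeployable is an optimistic assumption.

```python
HOURS_SAVED_PER_WEEK = 3.6   # DX figure cited above
WEEKS_PER_YEAR = 52          # same assumption as the text (no holidays or leave)


def annual_hours_saved(hours_per_week: float = HOURS_SAVED_PER_WEEK,
                       weeks: int = WEEKS_PER_YEAR) -> float:
    """Hours saved per developer per year."""
    return hours_per_week * weeks


def annual_value(team_size: int, loaded_hourly_cost: float) -> float:
    """Rough yearly value of saved time for a team.

    team_size and loaded_hourly_cost are hypothetical inputs, not figures
    from any cited report.
    """
    return annual_hours_saved() * team_size * loaded_hourly_cost


if __name__ == "__main__":
    print(f"{annual_hours_saved():.1f} hours/year per developer")
    print(f"${annual_value(team_size=50, loaded_hourly_cost=100.0):,.0f} per year")
```

Swapping 52 for the number of weeks actually worked gives a more conservative estimate.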

3. AI Coding Assistant Statistics

- GitHub Copilot reported 20M+ all-time users as of mid-2025. Copilot represents one of the largest single-product footprints in the category.

- Market leadership and vendor rankings: Gartner’s 2025 Magic Quadrant for AI code assistants ranks GitHub and other major vendors as Leaders.

- Gartner estimated the 2025 AI code-assistant market at $3.0–$3.5B. This frames Copilot within a multi-vendor, competitive market with rapid product innovation.

- Multi-tool usage is common: JetBrains and other surveys report developers using multiple assistants, with variations by role and task (e.g., juniors use autocompletion and explanation more; seniors use generation for scaffolding).

GitHub Copilot Productivity Studies (Microsoft, GitHub, McKinsey)

Several studies have measured the productivity impact of AI coding assistants, particularly GitHub Copilot.

Microsoft & GitHub Research

GitHub and Microsoft conducted controlled developer productivity experiments and found:

- Developers completed tasks 55.8% faster using GitHub Copilot

- Developers using Copilot were 78% more likely to complete tasks successfully

- 88% of developers reported increased productivity

- 74% reported being able to focus on more satisfying work

- 87% reported less mental effort when coding

These findings come from GitHub’s developer productivity research conducted with real-world programming tasks.

GitHub Code Quality Study

GitHub also evaluated code correctness:

- Copilot-assisted developers were 53.2% more likely to pass all unit tests

- Copilot users wrote more comprehensive test coverage

- AI-assisted developers produced more maintainable code in structured tasks

This suggests AI improves both speed and code completeness.

McKinsey AI Productivity Research

McKinsey’s 2024–2025 AI research found:

- Software engineering is among the top 3 functions benefiting from AI

- AI coding assistants can improve developer productivity 20–45%

- AI-assisted development reduces onboarding time for junior developers

This reinforces AI coding assistants as one of the highest ROI enterprise AI deployments.

4. How Much Code Is Written by AI?

One of the biggest shifts in software development is not just how developers use AI — but how much code AI is actually writing.

Recent telemetry data from real-world repositories shows that AI is already responsible for a meaningful portion of production code.

- 22% of merged code is AI-authored (DX analysis of 135,000+ developers)

- Daily AI users merge ~60% more pull requests than light users

- 91% of developers in active repos use AI during development

- AI usage is highest for boilerplate, test generation, refactoring, and documentation

These numbers indicate that AI is no longer just assisting developers — it is actively producing a significant share of shipped software.

Where AI Writes the Most Code

Organizations report AI contributing heavily in specific development areas:

High AI Contribution Areas

- Boilerplate code generation

- Unit test creation

- API integrations

- Documentation generation

- Refactoring and modernization

- Debugging suggestions

Lower AI Contribution Areas

- Architecture decisions

- Security-critical logic

- Performance-sensitive systems

- Core business logic

This pattern reflects how teams currently treat AI as a coding accelerator, while developers remain responsible for design, validation, and critical logic.

AI Code Volume Is Growing Fast

The share of AI-written code has increased rapidly:

| Year | Estimated AI Code Share |

|---|---|

| 2022 | <5% (early experiments) |

| 2023 | 5–10% (Copilot adoption begins) |

| 2024 | 10–18% (enterprise rollout starts) |

| 2025 | ~22% merged code AI-authored |

| 2026 | Growing across production workflows |

This growth is driven by:

- Better models (GPT-4+, Gemini, Claude, etc.)

- IDE integration (VS Code, JetBrains, GitHub)

- Enterprise adoption and policy support

- Developer comfort with AI workflows

The Tradeoff: More Code, More Review Needed

While AI increases output, studies also show:

- AI-coauthored PRs have ~1.7× more issues

- Teams often under-review AI-generated code

- Verification and testing become more important

This creates a new development dynamic:

AI increases speed → Teams must increase validation. Organizations that measure AI-written code percentage, track defect rates, and implement automated checks tend to see the best ROI.
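One way to track the defect-rate side of this tradeoff is to compare issues per PR between AI-assisted and human-only changes. The sketch below is illustrative only: the `PullRequest` fields and the sample data are assumptions, and it is not the methodology behind the ~1.7× figure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PullRequest:
    ai_assisted: bool   # hypothetical flag, e.g. derived from a PR label
    issues_found: int   # issues raised in review or by scanners


def issues_per_pr(prs: list[PullRequest], ai_assisted: bool) -> float:
    """Mean issues per PR for one cohort; 0.0 if the cohort is empty."""
    cohort = [pr.issues_found for pr in prs if pr.ai_assisted == ai_assisted]
    return sum(cohort) / len(cohort) if cohort else 0.0


def issue_ratio(prs: list[PullRequest]) -> Optional[float]:
    """AI-assisted issue rate divided by the human-only issue rate."""
    human = issues_per_pr(prs, ai_assisted=False)
    if human == 0:
        return None  # ratio is undefined without a human-only baseline
    return issues_per_pr(prs, ai_assisted=True) / human


# Made-up sample data, chosen only to illustrate the calculation.
sample = [
    PullRequest(ai_assisted=True, issues_found=4),
    PullRequest(ai_assisted=True, issues_found=2),
    PullRequest(ai_assisted=False, issues_found=2),
    PullRequest(ai_assisted=False, issues_found=2),
]

if __name__ == "__main__":
    print(issue_ratio(sample))
```

A ratio computed this way does not control for PR size or complexity, so it works better as a trend indicator within one organization than as a cross-team benchmark.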

AI Coding Assistant Performance Metrics (2026)

Recent 2025–2026 developer analytics reveal measurable performance gains from AI coding assistants.

Productivity Metrics

- ~3.6 hours saved per developer per week (DX 135k developer dataset)

- Daily AI users merge 60% more pull requests

- 55.8% faster task completion (GitHub study)

- 20–45% developer productivity improvement (McKinsey)

Code Output Metrics

- 22% of merged code AI-authored (DX telemetry)

- 91% of developers use AI in active repos

- AI users commit code more frequently

Quality & Risk Metrics

- AI-generated PRs show 1.7× more issues

- 96% of developers say they do not fully trust AI code

- 48% of developers always review AI code before merging

These metrics highlight the speed vs quality tradeoff in AI-assisted development.

5. AI Coding Enterprise Adoption Statistics

Fortune / Enterprise Penetration

- Public reporting and vendor commentary indicate very high enterprise penetration for AI coding tools.

- Multiple vendor statements and reporting suggest strong uptake among large enterprises and Fortune 100 companies.

- TechCrunch and vendor reports point to enterprise deployments at scale.

How Enterprises Use AI

- Enterprises typically deploy AI coding tools together with: access controls, logging, approved model policies, and CI policy gates — not simply open access.

- IDC and Gartner published guidance documents recommending governance, sandboxing, and risk assessments when deploying generative AI into developer environments.
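A CI policy gate of the kind described above can be sketched as a function that collects violations for a proposed change. Every field name, the approved-model list, and the specific rules are hypothetical; they stand in for whatever a given CI system and governance policy actually expose.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeRequest:
    # All fields are hypothetical; map them to what your CI system provides.
    ai_assisted: bool
    model_used: Optional[str]
    security_scan_passed: bool
    human_approvals: int


APPROVED_MODELS = {"model-a", "model-b"}  # placeholder names, not real products


def evaluate_gate(change: ChangeRequest) -> list[str]:
    """Return policy violations; an empty list means the gate passes."""
    violations = []
    if not change.security_scan_passed:
        violations.append("security scan failed")
    if change.human_approvals < 1:
        violations.append("at least one human approval required")
    if change.ai_assisted and change.model_used not in APPROVED_MODELS:
        violations.append("model is not on the approved list")
    return violations


if __name__ == "__main__":
    change = ChangeRequest(ai_assisted=True, model_used="model-c",
                           security_scan_passed=True, human_approvals=1)
    print(evaluate_gate(change))
```

Returning the full list of violations, rather than failing on the first one, gives developers a single actionable report per run.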

Surveyed Enterprise Signals

- McKinsey’s 2025 surveys show that functions like software engineering represent a large share of potential AI-driven economic value (roughly 25% in some sector breakdowns), which helps explain strong enterprise interest in tooling investments.

IDC Deployment Models for AI Code Assistants

IDC and enterprise AI guidance identify three primary deployment models for AI coding assistants:

1. SaaS / Hosted Deployment

Most organizations deploy AI coding assistants as:

- GitHub Copilot SaaS

- Cloud-hosted AI assistants

- IDE-embedded AI services

Benefits:

- Fast rollout

- Minimal infrastructure

- Automatic updates

Tradeoffs:

- Data governance concerns

- Compliance limitations

2. Enterprise-Managed Deployment

Large organizations deploy AI coding assistants with:

- SSO integration

- Policy enforcement

- Logging and audit trails

- Access control

Benefits:

- Strong governance

- Security compliance

- Enterprise observability

This is the most common enterprise deployment model.

3. Private / Hybrid Deployment

Regulated industries often deploy:

- Private LLMs

- On-prem AI coding assistants

- Hybrid cloud models

Benefits:

- Full data control

- Compliance support

- Secure code environments

Industries using this model:

- Financial services

- Healthcare

- Government

- Defense

6. AI Coding Market & Competitive Statistics

Market Sizing & Growth

- Gartner estimated the AI code assistant market at $3.0–$3.5 billion in 2025.

- Other market research firms show similar coding-assistant segments within broader generative AI tool forecasts.

- Broader AI spending is far larger: Gartner forecasted ~$1.5 trillion worldwide AI spending in 2025 across infrastructure, software, and services.

- This indicates coding assistants are a growing slice of a very large market.
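To put "a growing slice" in proportion, the two Gartner figures above can be divided directly. This is a rough sketch: a market-size estimate and a spending forecast may not be measured on the same basis, so the result is an order-of-magnitude indication only.

```python
CODE_ASSISTANT_MARKET_USD = (3.0e9, 3.5e9)   # Gartner 2025 estimate (low, high)
TOTAL_AI_SPENDING_USD = 1.5e12               # Gartner 2025 worldwide AI spending forecast


def share_of_total(segment_usd: float, total_usd: float) -> float:
    """Segment size as a percentage of the total."""
    return segment_usd / total_usd * 100


low, high = (share_of_total(v, TOTAL_AI_SPENDING_USD)
             for v in CODE_ASSISTANT_MARKET_USD)

if __name__ == "__main__":
    print(f"Code assistants: {low:.2f}% to {high:.2f}% of forecast AI spending")
```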

Competitive Landscape Representation

Below is a comparison of major platforms used for AI in coding, code generation, and automated development workflows. The table highlights representative reach, primary use cases, and notable enterprise features that influence adoption decisions.

| Platform / Vendor | Representative Users / Reach | Primary Use Cases | Notable Enterprise Features |

|---|---|---|---|

| GitHub Copilot | 20M+ all-time users (mid-2025) | AI code completion, pair-programming suggestions, function generation, debugging help | Enterprise policy controls, SSO integration, GitHub Enterprise compatibility, security scanning integrations |

| Amazon CodeWhisperer | Widely adopted across AWS developer ecosystem | AI code suggestions for cloud development, AWS SDK snippets, infrastructure automation code | IAM integration, enterprise licensing via AWS Marketplace, governance controls tied to AWS environments |

| Google Cloud Code / Gemini Code Assist | Used by developers building applications on Google Cloud | API integration code generation, cloud automation snippets, deployment helpers | Integrated with Google Cloud IAM, Cloud Build, and enterprise billing systems |

| GitLab AI (GitLab Duo) | Used within GitLab’s global DevOps platform user base | Merge request automation, CI/CD pipeline assistance, AI code suggestions | Built-in vulnerability scanning, audit logs, RBAC access control, enterprise DevSecOps workflows |

AI Coding Assistant Market Share (2025–2026)

While exact revenue market share is still emerging, developer usage surveys provide strong adoption indicators. According to a recent Stack Overflow usage-share survey, ChatGPT and GitHub Copilot are the AI coding assistants developers use most.

Most Used AI Coding Assistants:

- ChatGPT — 81.7%

- GitHub Copilot — 67.9%

- Google Gemini — growing adoption

- Amazon CodeWhisperer — enterprise adoption

- GitLab Duo — DevOps ecosystem adoption

These numbers reflect developer usage share, which often precedes revenue share.

7. AI Coding Industry Trends & Broader Market Growth

Macro Trends

- McKinsey’s Global AI research and industry reports emphasize that capturing AI value requires process change — engineering organizations that combine adoption with governance and operating model changes capture more value.

- McKinsey highlights software engineering as a top function to benefit from AI.

- IDC and Gartner guidance emphasize toolchain integration, security scanning, and lifecycle management as strategic priorities for organizations scaling generative AI in development.

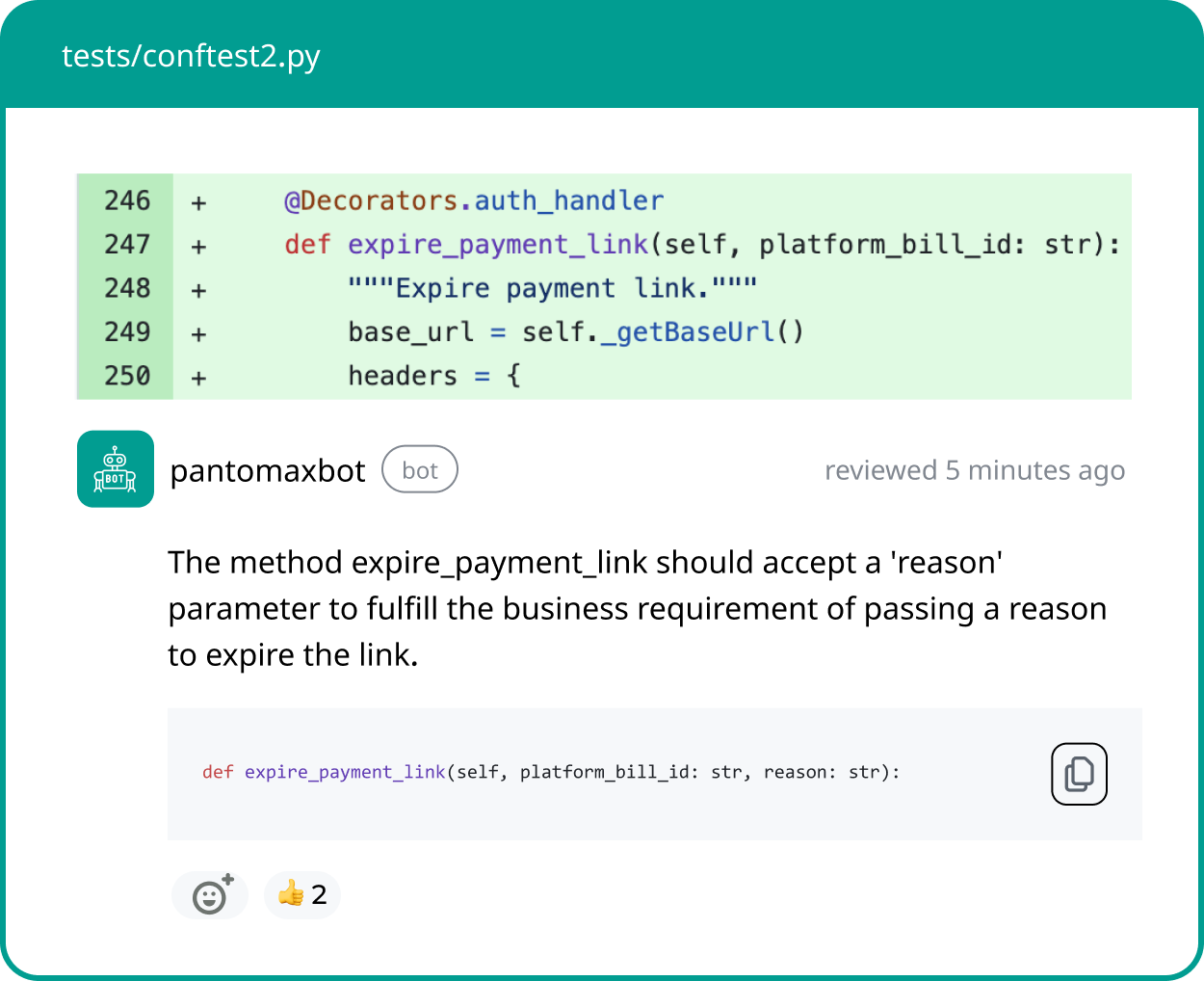

Risk Vs Reward Signals

- Independent code analyses, notably CodeRabbit’s December 2025 report, found ~1.7× more issues in AI-coauthored PRs. This is a clear signal that, without new review patterns and automation, AI can lengthen review cycles and increase defects.

- Other reporting shows uneven verification practices: industry coverage indicates many teams still under-review AI outputs, which creates verification debt and security risk.

- Recent articles and surveys suggest that a significant portion of developers do not always verify AI code prior to commit.

AI Code Assistant Tools Report (2026)

The AI coding assistant ecosystem has expanded rapidly between 2024 and 2026, with both large platform providers and specialized startups launching developer-focused AI tools.

Today’s AI code assistants range from IDE-native copilots to agent-based development tools and enterprise DevOps AI platforms.

AI Code Assistant Tools Comparison (2026)

| Tool | Company | Primary Use Case | Key Strength | Enterprise Adoption | Notable Features |

|---|---|---|---|---|---|

| GitHub Copilot | Microsoft / GitHub | Inline code generation | Market leader | Very High | IDE integration, enterprise controls |

| ChatGPT (Code Assistant) | OpenAI | Debugging, generation, explanation | Most widely used AI assistant | High | Multi-language, architecture help |

| Google Gemini Code Assist | Google | Cloud & app development | Google Cloud integration | Growing | API generation, cloud automation |

| Amazon CodeWhisperer | AWS | Cloud & AWS code generation | AWS ecosystem | High (AWS users) | IAM integration, security scanning |

| GitLab Duo | GitLab | DevOps + CI/CD automation | DevOps-native AI | Growing enterprise | Merge request automation |

| JetBrains AI Assistant | JetBrains | IDE-native coding AI | Deep IDE integration | Growing | IntelliJ, PyCharm integration |

| Cursor | Anysphere | Agent-based development | Fast adoption | Emerging | AI-first IDE |

| Codeium | Codeium | Free AI code completion | Rapid growth | Medium | Multi-IDE support |

| Sourcegraph Cody | Sourcegraph | Codebase understanding | Enterprise search AI | Enterprise adoption | Repo-wide context |

| Replit Ghostwriter | Replit | AI coding + deployment | Full-stack AI dev | Startup adoption | Instant deployment |

Most Common Multi-Tool AI Developer Stack (2026)

Many developers now use:

- GitHub Copilot — inline suggestions

- ChatGPT — debugging and architecture

- Cursor — large refactors

- GitLab AI — CI/CD automation

This layered AI workflow reflects how AI is becoming embedded across the entire software development lifecycle.

Recommendations & Action Checklist

- Tag and measure AI-assisted changes. Telemetry shows 22% of merged code can be AI-authored — measure this in your repos to understand exposure.

- Require automated security and policy gates. Recent surveys show higher issue rates in AI PRs; automated scanners can catch many classes of failures before review.

- Train developers on prompt & verification hygiene. Multiple reports signal wide adoption but mixed confidence; targeted enablement reduces error and improves ROI.

- Measure throughput and review time. Analytics for 2026 demonstrate daily AI users can merge ~60% more PRs — track throughput to validate value.

- Govern models & data access. IDC/Gartner recommend sandboxing and model governance for enterprise deployments.
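The first checklist item, tagging and measuring AI-assisted changes, could start with a commit-message trailer. The sketch below assumes a hypothetical `AI-Assisted: true` trailer convention, which is not prescribed by any of the reports cited here, and it counts commits rather than lines of merged code, so its output is not directly comparable to the 22% figure.

```python
import re

# Hypothetical trailer convention; adapt the pattern to your own tagging scheme.
AI_TRAILER = re.compile(r"^AI-Assisted:\s*(yes|true)\s*$",
                        re.IGNORECASE | re.MULTILINE)


def is_ai_assisted(commit_message: str) -> bool:
    """True if the commit message carries the AI-Assisted trailer."""
    return bool(AI_TRAILER.search(commit_message))


def ai_share(commit_messages: list[str]) -> float:
    """Percentage of commits tagged as AI-assisted (0.0 for an empty list)."""
    if not commit_messages:
        return 0.0
    tagged = sum(is_ai_assisted(m) for m in commit_messages)
    return tagged / len(commit_messages) * 100


# Made-up commit messages for illustration.
messages = [
    "Add retry logic to uploader\n\nAI-Assisted: true",
    "Fix typo in README",
    "Refactor config loader\n\nAI-Assisted: false",
    "Bump dependency versions",
]

if __name__ == "__main__":
    print(f"{ai_share(messages):.0f}% of commits tagged AI-assisted")
```

Self-reported tags undercount when developers forget them, so teams that need accuracy may prefer signals emitted by the tooling itself.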

Conclusion

By 2026, AI coding is no longer experimental — it’s embedded in the developer workflow and enterprise toolchains. Adoption is widespread (84%+), daily use is common (~51% among pros), and empirical analytics show material productivity gains (~3.6 hours/week and higher PR throughput for daily users).

At the same time, independent code analysis raises a clear caution: AI-assisted code can increase issue counts (~1.7×) and security findings if not paired with governance.

That combination — speed plus risk — defines the next phase. Organizations that treat AI outputs as draft material, apply automated checks, tag AI changes, and rework review and CI practices will keep speed without sacrificing quality.

Use the statistics above as your measurement baseline: instrument AI usage in your repos, measure throughput and defect rates, and iterate policies until you capture predictable ROI.

FAQs

Q: How many developers use AI coding?

A: Around 84% of developers report that they either use or plan to use AI development tools, according to the Stack Overflow 2025 developer survey. Among professional developers, approximately 51% say they use AI tools on a daily basis.

Q: How much time do AI tools save?

A: Analytics across more than 135,000 developers suggest that AI coding tools save an average of about 3.6 hours per week. Many organizations use this figure as a starting point when estimating productivity gains and building ROI models for AI-assisted development.

Q: Is AI-generated code safe to merge?

A: Not by default. Independent analysis from CodeRabbit found that pull requests containing AI-generated code had roughly 1.7× more issues than human-written code alone. For this reason, most teams combine AI assistance with human code review and automated security scanning before merging.

Q: How big is the AI code assistant market?

A: Gartner estimated the AI code assistant market at roughly $3.0–$3.5 billion in 2025. When considering the broader AI ecosystem—including infrastructure, software, and services—global AI spending is projected to reach approximately $1.5 trillion in 2025.

Q: Which tools lead adoption?

A: GitHub Copilot is widely considered a category leader, reporting more than 20 million all-time users by mid-2025. Other major tools frequently appear in analyst reports and industry evaluations such as Gartner’s Magic Quadrant for AI code assistants.