AI code review tools for GitLab merge requests help development teams catch bugs earlier, enforce security standards, and ship faster without sacrificing quality. As GitLab adoption continues to grow across mid-market and enterprise engineering teams, AI-powered code reviewers have become a core part of modern merge request workflows.

Unlike traditional manual reviews, AI code review for GitLab operates directly inside merge requests—analyzing code diffs, flagging issues, and providing inline feedback before human reviewers engage. The result is faster reviews, fewer defects reaching production, and reduced cognitive load for senior engineers.

This guide compares the best AI code review tools for GitLab in 2026, including native options, security-focused platforms, and context-aware AI reviewers.

What Is AI Code Review for GitLab?

AI code review for GitLab uses machine learning and static analysis to automatically review merge requests. These tools analyze code changes, detect bugs and security vulnerabilities, enforce coding standards, and provide actionable feedback directly within GitLab before code is merged.

Most AI reviewers integrate through GitLab webhooks, CI/CD pipelines, or APIs, enabling them to:

- Comment inline on merge requests

- Block merges using quality gates

- Generate summaries and explanations

- Suggest or apply fixes automatically
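
As a rough illustration of the webhook path, the sketch below shows how a reviewer service might decide whether an incoming delivery should start a review. It is a minimal sketch, not any vendor's implementation: `should_review` and `REVIEWABLE_ACTIONS` are hypothetical names, the `headers` argument is assumed to be a plain dict with the exact header spelling shown, and the field names follow GitLab's merge request webhook payload as commonly documented (verify them against your GitLab version). Skipping draft merge requests is a policy assumption, not a GitLab requirement.

```python
import hmac

# Hypothetical policy: only these merge request actions warrant a fresh review.
REVIEWABLE_ACTIONS = {"open", "reopen", "update"}


def should_review(headers: dict, payload: dict, secret: str) -> bool:
    """Return True when a webhook delivery should trigger an automated review."""
    # GitLab sends the secret token configured on the webhook in X-Gitlab-Token.
    token = headers.get("X-Gitlab-Token", "")
    # Constant-time comparison avoids leaking the secret through timing.
    if not hmac.compare_digest(token.encode(), secret.encode()):
        return False
    # Ignore pushes, notes, pipeline events, and anything else.
    if payload.get("object_kind") != "merge_request":
        return False
    attrs = payload.get("object_attributes", {})
    # Assumed policy: do not review merge requests still marked as draft.
    if attrs.get("draft"):
        return False
    return attrs.get("action") in REVIEWABLE_ACTIONS
```

A web framework handler would call `should_review(request_headers, parsed_json, configured_secret)` and only then fetch the diff and run analysis.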

Why AI Code Review Matters for GitLab Teams

Manual code reviews are essential but increasingly a bottleneck. As repositories grow and teams scale, reviewers spend disproportionate time on issues like formatting, repetitive bugs, and basic security checks.

AI-powered code review tools integrated with GitLab address this by:

- Automating routine checks

- Identifying vulnerabilities earlier

- Enforcing consistent standards across teams

Based on internal benchmarks and publicly shared case studies, teams report significantly shorter review cycles and higher defect detection rates when AI reviewers handle first-pass analysis.

Top AI Code Review Tools for GitLab Merge Requests

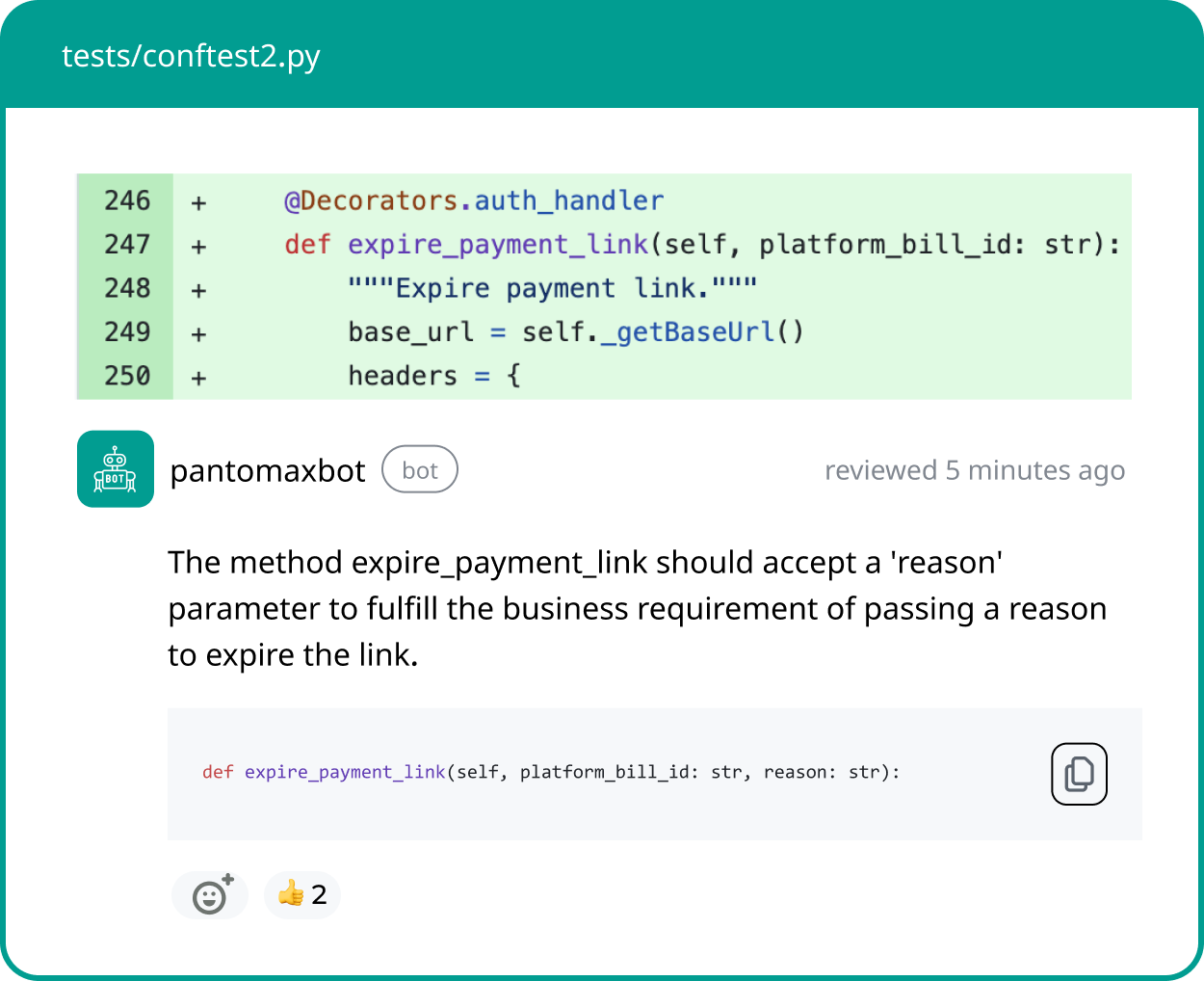

1. Panto AI

Panto AI is a context-driven AI code reviewer designed for GitLab teams that require alignment between business intent, security controls, and engineering execution across the SDLC.

Rather than analyzing diffs in isolation, it integrates with systems like Jira and Confluence to understand the rationale behind changes, delivering merge request summaries, Q&A, and inline merge request feedback.

- Support for 30,000+ security rules across 30+ languages

- Cloud or on-premise deployment with zero code retention

- Automated or /review-triggered merge request reviews

Panto AI is well suited for regulated industries and privacy-sensitive enterprise environments.
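
Comment-triggered reviews of the /review kind can be illustrated generically. The sketch below is not Panto AI's implementation; it only shows how a service might recognize a trigger command in a GitLab comment (note) webhook event. The function name `review_request_from_note` is hypothetical, and the payload fields (`object_kind`, `noteable_type`, `note`, `merge_request.iid`) are assumed to match GitLab's comment event format.

```python
from typing import Optional


def review_request_from_note(payload: dict, command: str = "/review") -> Optional[dict]:
    """Return the project and merge request to review, or None if the comment is not a trigger."""
    if payload.get("object_kind") != "note":
        return None
    attrs = payload.get("object_attributes", {})
    # Comments can also be left on commits, issues, and snippets; ignore those.
    if attrs.get("noteable_type") != "MergeRequest":
        return None
    # Treat the comment as a command only when its first word is the trigger.
    words = attrs.get("note", "").split()
    if not words or words[0] != command:
        return None
    return {
        "project_id": payload["project"]["id"],
        "merge_request_iid": payload["merge_request"]["iid"],
    }
```

Matching only the first word is a deliberate choice here: it keeps a comment that merely mentions the command in passing from starting a review.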

2. Greptile

Greptile analyzes repositories holistically by building a dependency graph that captures how changes propagate across services, modules, and architectural layers.

This approach enables detection of cross-cutting issues that diff-based reviewers miss, particularly in large monorepos and distributed systems.

- Repository-wide dependency and impact analysis

- Support for mainstream languages and monorepos

- SOC 2 Type II compliant with encrypted data handling

Greptile is a strong fit for enterprise teams managing complex, interconnected codebases.
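
To make the idea of dependency-based impact analysis concrete, here is a minimal sketch of how a change can be propagated through a dependency graph. It is a generic breadth-first traversal, not Greptile's actual algorithm; the function name `impacted_modules` and the module names in the usage note are invented for illustration.

```python
from collections import deque


def impacted_modules(depends_on: dict, changed: set) -> set:
    """Return every module that directly or transitively depends on a changed module."""
    # Invert the edges so we can ask "who depends on this module?".
    dependents = {}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    impacted = set()
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            # Skip modules already recorded, and the changed modules themselves.
            if dependent not in impacted and dependent not in changed:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted
```

With a hypothetical graph where `checkout-ui` depends on `billing-api`, which depends on `payments-lib`, which depends on `currency-utils`, a change to `currency-utils` marks all three upstream modules as impacted, even though a diff-only reviewer would see just one file.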

3. CodeRabbit

CodeRabbit provides AI-powered GitLab merge request reviews focused on incremental, line-by-line feedback that evolves as commits are added.

Its conversational review model emphasizes developer experience, offering fast insights without introducing heavy configuration or workflow disruption.

- Inline, conversational feedback on specific code lines

- Automatic filtering of trivial or low-risk changes

- Suggested commits that can be applied directly

CodeRabbit works best for teams seeking rapid feedback with minimal operational overhead.

4. CodeAnt AI

CodeAnt AI combines AI-assisted code review with embedded security scanning optimized for GitLab-centric workflows.

It detects vulnerabilities such as SQL injection, leaked secrets, and unsafe dependencies, while also scoring repositories on overall code health.

- Automated detection of common security vulnerabilities

- Auto-fix capability for a significant portion of findings

- Code Health Scores covering security, code duplication, and complexity

CodeAnt AI is suitable for teams aiming to blend quality, security, and productivity metrics.

5. SonarQube

SonarQube is a mature and widely adopted code quality platform with deep roots in enterprise software governance.

Its GitLab integration enriches merge requests with quality gates, vulnerability reports, and maintainability insights.

- Deep static analysis across numerous languages

- Compliance, audit, and regulatory reporting capabilities

- Enforcement of merge-blocking quality thresholds

SonarQube remains a reliable choice for organizations with strict governance requirements.

6. Codacy

Codacy delivers automated code quality checks directly into GitLab merge requests using annotations, summaries, and pipeline status indicators.

Built on proven open-source analyzers, it supports a broad range of languages and integrates cleanly into CI/CD workflows.

- Support for 40+ programming languages

- Backed by tools such as ESLint, PMD, and Checkov

- Configurable analyzers and enforceable quality gates

Codacy is well suited for teams seeking standardized, automated quality enforcement.

7. Snyk (DeepCode AI)

Snyk’s DeepCode AI focuses on security-first code review, combining symbolic execution with AI trained on real-world vulnerability data.

It prioritizes findings based on exploitability and real risk, integrating into GitLab primarily through CI/CD pipelines.

- Reachability-based exploitability analysis

- Consideration of exploit maturity and package popularity

- Strong focus on application and dependency security

Snyk is ideal for teams where security risk reduction is the primary objective.

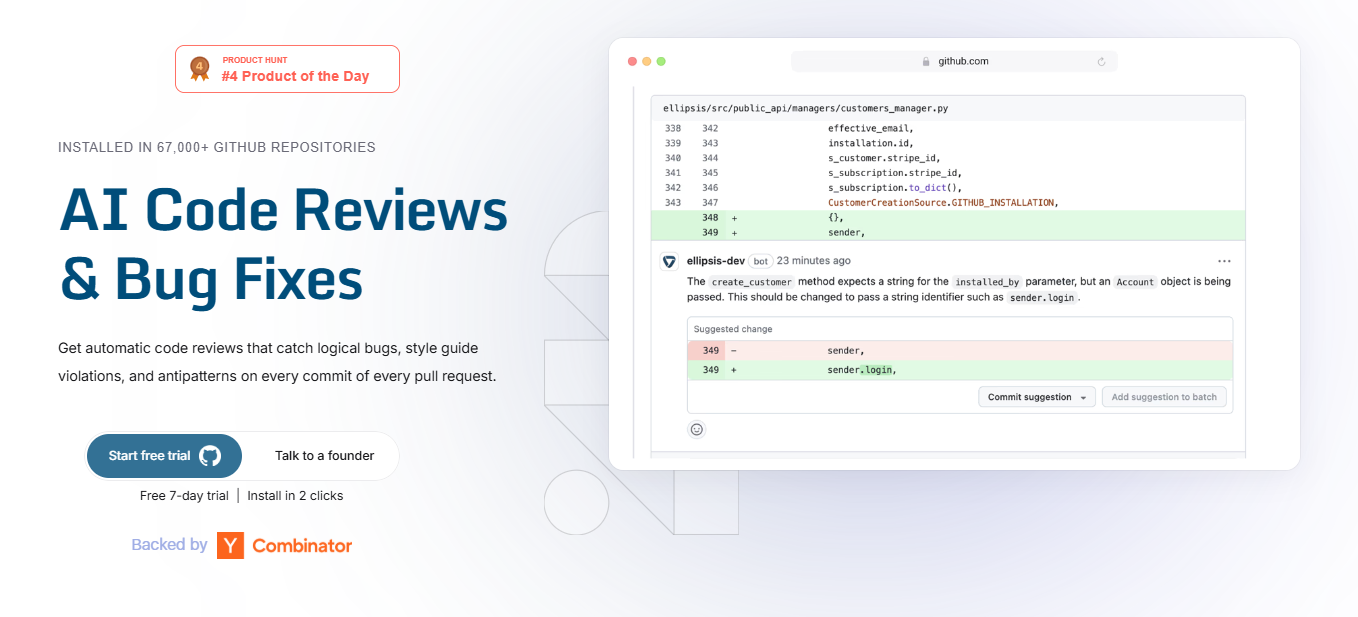

8. Ellipsis AI

Ellipsis AI automates bug detection and fix generation within GitLab repositories, responding intelligently to merge request comments.

It emphasizes control and safety, ensuring that code changes occur only when explicitly authorized by developers.

- Automated bug detection with generated fixes

- Interpretation of merge request comments and instructions

- No source code retention and explicit-approval-only changes

Ellipsis AI suits teams with strict governance and change-control policies.

9. Sourcery

Sourcery provides automated GitLab merge request reviews with a strong emphasis on Python code quality and refactoring.

Its feedback includes merge request summaries, inline suggestions, and structural improvements tailored to Python best practices.

- Python-focused automated refactoring suggestions

- Inline feedback and review summaries

- Free for public repositories and GitLab self-hosting support

Sourcery is particularly attractive to Python-heavy and open-source teams.

10. Qodo Merge (formerly CodiumAI)

Qodo Merge is an AI code review agent from Qodo (formerly CodiumAI), built on the open-source PR-Agent project and designed to integrate with GitLab via CI/CD pipelines or webhooks.

It offers structured reviews and automation features that can be customized through commands and labels.

- Open-source and self-managed deployment model

- Automated PR descriptions and test generation

- Highly configurable review behavior via labels and commands

Qodo Merge fits engineering-led teams comfortable managing their own tooling.

11. GitLab Duo: Native AI Code Review

GitLab Duo delivers AI-powered code review capabilities natively within the GitLab platform, requiring no external integrations.

It performs initial merge request reviews, generates merge request summaries, and suggests improvements directly in the GitLab UI.

- Built-in AI code reviews and merge request summaries

- No third-party tools or data sharing required

- Minimal setup and tight GitLab integration

GitLab Duo is the most straightforward option for organizations prioritizing native functionality.

Quick Comparison: Top GitLab AI Code Reviewers

| Tool | Best For | GitLab Integration | Context Awareness | Security Strength | Self-Hosted |
|---|---|---|---|---|---|
| Panto AI | Business + security context | Native | Very High | Very Strong | Yes |
| Greptile | Monorepos, deep dependencies | Native | Full codebase | Medium | Yes |
| CodeRabbit | Lightweight GPT reviews | Native | Diff-based | Medium | Enterprise |
| CodeAnt AI | Security + auto-fix | Native | High | Strong | Yes |
| SonarQube | Enterprise static analysis | Native | Low | Very Strong | Yes |
| Snyk (DeepCode) | Security-first teams | CI/CD | High | Excellent | Yes |
| GitLab Duo | Native GitLab users | Native | Medium | Medium | Yes |

Recommendations by Team Type

- Small teams (5–15 developers): CodeRabbit, Sourcery

- Mid-market teams: Panto AI, CodeAnt AI

- Security-first organizations: Snyk, SonarQube

- Monorepos and complex architectures: Greptile

- Native GitLab users: GitLab Duo

Implementation Considerations

Integration Complexity

Most tools require a GitLab access token with API scope and webhook or CI/CD configuration. Teams using self-hosted GitLab should prioritize tools with on-premise deployment options.
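
For orientation, the sketch below shows roughly what the API side of such an integration looks like: building an authenticated request that posts a comment on a merge request through GitLab's REST API notes endpoint. It is a minimal sketch using only the standard library; `build_note_request` is a hypothetical helper, and the host, IDs, and token in the usage note are placeholders. The request is constructed but not sent, so it can be inspected safely.

```python
import json
import urllib.request


def build_note_request(base_url: str, project_id: int, mr_iid: int,
                       token: str, body: str) -> urllib.request.Request:
    """Build (but do not send) a request that adds a comment to a merge request."""
    url = (f"{base_url.rstrip('/')}/api/v4/projects/{project_id}"
           f"/merge_requests/{mr_iid}/notes")
    return urllib.request.Request(
        url,
        data=json.dumps({"body": body}).encode("utf-8"),
        # The access token needs the api scope to create notes.
        headers={"PRIVATE-TOKEN": token, "Content-Type": "application/json"},
        method="POST",
    )
```

Calling `build_note_request("https://gitlab.example.com", 42, 7, token, "Automated review: no issues found.")` and passing the result to `urllib.request.urlopen` would post the comment. For self-hosted GitLab, `base_url` is simply the instance's own address.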

Balancing Automation and Human Review

AI reviewers are most effective when handling routine checks, allowing human reviewers to focus on architecture and business logic. GitLab approval rules can enforce completion of both AI and human reviews.

Security and Compliance

For regulated environments, on-premise deployment and zero code retention policies are critical. Several tools reviewed above meet SOC 2 and enterprise compliance standards.

Choosing the Right AI Code Reviewer for GitLab

There is no single “best” AI code reviewer for GitLab—only the best fit for your team’s scale, security posture, and workflow maturity.

Teams prioritizing contextual understanding and security depth often choose Panto AI. Organizations needing full codebase awareness lean toward Greptile. Security-first teams gravitate to Snyk or SonarQube, while smaller teams benefit from lightweight tools like CodeRabbit.

AI code review for GitLab is no longer optional for teams shipping at scale. By integrating AI reviewers into merge request workflows, engineering teams reduce cycle time, improve code quality, and free senior developers to focus on higher-impact work.

FAQs

Q: What is AI code review for GitLab merge requests?

AI code review for GitLab automatically analyzes merge request diffs using static analysis, machine learning, and policy engines before human reviewers step in. These tools comment inline, flag security vulnerabilities, enforce coding standards, generate summaries, and optionally block merges using quality gates. The goal is to reduce manual review overhead while improving defect detection earlier in the SDLC.

Q: How does AI integrate with GitLab merge requests?

Most AI reviewers integrate via GitLab webhooks, API tokens, or CI/CD pipelines. Once connected, they analyze code changes during merge request creation or updates, post inline comments, generate merge request summaries, and update pipeline status checks. Some tools operate natively inside GitLab, while others run as external services triggered during CI execution.

Q: Is GitLab Duo enough for enterprise-grade AI code review?

GitLab Duo provides native AI-powered summaries and first-pass review capabilities directly within GitLab. It is suitable for teams prioritizing tight platform integration and minimal setup. However, enterprises with strict governance, advanced security requirements, or cross-system context needs may require specialized tools offering deeper rule coverage, repository-wide reasoning, or on-premise deployment controls.

Q: What makes Panto AI different from other GitLab AI reviewers?

Panto AI emphasizes contextual review rather than diff-only analysis. It connects merge requests to business systems like Jira and Confluence to understand intent, applies extensive security rule coverage across multiple languages, and supports zero code retention with cloud or on-premise deployment. This makes it particularly suited for regulated or privacy-sensitive environments.

Q: Which GitLab AI code review tool is best for monorepos?

Tools that construct repository-wide dependency graphs are better suited for monorepos. For example, Greptile analyzes cross-service impact and architectural dependencies rather than isolated diffs. This allows detection of systemic risks that traditional line-by-line reviewers may miss.

Q: Are AI code review tools secure for proprietary repositories?

Security depends on deployment model and vendor policies. Many enterprise tools offer on-premise hosting, encrypted processing, SOC 2 compliance, and zero code retention guarantees. Teams should review data residency policies, logging practices, and model training disclosures before granting repository access. Free or cloud-only tools may require additional due diligence.

Q: Can AI code review tools block merges automatically?

Yes. Many platforms integrate with GitLab approval rules and CI/CD pipelines to enforce quality gates. If a tool detects critical vulnerabilities, policy violations, or failing quality thresholds, it can prevent merges until issues are resolved. This enables automated enforcement without removing human oversight.
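
One common way to wire up such a gate is a CI job whose exit code reflects the review findings. The sketch below assumes findings arrive as dicts with `severity` and `message` keys and uses a four-level severity scale; both are illustrative assumptions rather than any tool's real output format, and `gate_exit_code` is a hypothetical name.

```python
# Hypothetical severity scale; real tools define their own levels.
SEVERITY_RANK = {"info": 0, "minor": 1, "major": 2, "critical": 3}


def gate_exit_code(findings: list, fail_at: str = "major") -> int:
    """Return 1 if any finding is at or above the threshold severity, else 0."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f for f in findings if SEVERITY_RANK[f["severity"]] >= threshold]
    # Print blocking findings so they appear in the CI job log.
    for finding in blocking:
        print(f"BLOCKING {finding['severity']}: {finding['message']}")
    return 1 if blocking else 0
```

A CI script would end with `sys.exit(gate_exit_code(findings))`. When the project's merge request settings require pipelines to succeed, a non-zero exit fails the job and GitLab withholds the merge until the findings are fixed or the threshold is adjusted.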

Q: Do AI reviewers replace human code reviews?

No. AI reviewers are most effective as first-pass analyzers. They automate repetitive checks such as formatting, security scanning, and simple logic validation. Human reviewers remain essential for architectural decisions, domain correctness, and business logic validation. High-performing teams combine both layers.

Q: Which GitLab AI tool is best for security-first teams?

Security-focused teams often choose tools such as Snyk (DeepCode AI) or SonarQube, which emphasize vulnerability detection, exploitability analysis, and compliance reporting. These tools integrate deeply into CI/CD pipelines and are optimized for reducing security risk rather than improving developer ergonomics alone.

Q: What should teams evaluate before choosing a GitLab AI reviewer?

Key evaluation criteria include deployment model (cloud vs. self-hosted), security rule coverage, repository-wide context awareness, merge-blocking capabilities, compliance certifications, and integration complexity. Teams should also assess whether the tool aligns with their primary objective: speed, governance, security hardening, or architectural consistency.

Q: Is AI code review for GitLab becoming standard practice?

Yes. As merge request volume increases and engineering teams scale, AI-assisted review is rapidly becoming part of baseline DevOps hygiene. Organizations shipping at scale increasingly treat AI review as a prerequisite for maintaining quality without overloading senior engineers.