Software defects are expensive at every stage of development, but the real cost comes when they escape into production.

That is why modern quality engineering is not just about finding bugs faster; it is about reducing the number of bugs created in the first place and catching the ones that still slip through.

This is where preventive and detective QA come in. Teams often use the terms loosely, or assume that automation alone covers both. It does not.

Preventive QA focuses on reducing defects before they are introduced, while detective QA focuses on identifying defects after they exist but before they impact users.

In this article, you will learn what each approach means, how they differ, where they fit in the software delivery pipeline, which metrics matter, and how to build a balanced QA strategy that actually reduces production risk.

What is preventive QA?

Preventive QA includes the practices that reduce the chance of defects being introduced in the first place. The goal is not to “test harder later.” The goal is to improve the process so fewer defects make it into code, builds, or release candidates.

This is closely aligned with a shift-left mindset. Instead of waiting until the end of the cycle to inspect a product, teams move quality activities earlier into requirements, design, and implementation.

That can include writing acceptance criteria and designing tests before development begins, then applying code reviews and static analysis as the code is written. When these habits are consistent, they prevent ambiguity, reduce rework, and improve shared understanding across product, engineering, and QA.

A few examples make this concrete.

- A strong code review can catch logic errors, missing null checks, security issues, or inconsistent handling before merge.

- Static analysis can flag risky patterns, dead code, or style and complexity issues that often correlate with bugs.

- Well-written acceptance criteria can prevent developers from building the wrong thing.

- Test design before implementation helps the team think through edge cases, failure paths, and expected behavior before the code is even written.
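As one illustration of test design before implementation, the sketch below records acceptance criteria as a table of cases that exists before the function body is finalized. The `shipping_fee` function, the threshold, and the fee values are hypothetical and chosen only for this example.

```python
# Hypothetical acceptance criteria for a shipping fee rule, written as
# (order_total, expected_fee) pairs before implementation starts.
ACCEPTANCE_CASES = [
    (0.00, 5.00),    # a tiny order still pays the flat fee
    (49.99, 5.00),   # just below the free-shipping threshold
    (50.00, 0.00),   # boundary: the threshold itself qualifies
    (120.00, 0.00),  # well above the threshold
]

FREE_SHIPPING_THRESHOLD = 50.00  # assumed value for illustration
FLAT_FEE = 5.00                  # assumed value for illustration


def shipping_fee(order_total: float) -> float:
    """Return the shipping fee for an order total (hypothetical rule)."""
    if order_total < 0:
        raise ValueError("order_total must not be negative")
    return 0.0 if order_total >= FREE_SHIPPING_THRESHOLD else FLAT_FEE


def failed_cases() -> list:
    """Return the acceptance cases the implementation does not satisfy."""
    return [(total, want) for total, want in ACCEPTANCE_CASES
            if shipping_fee(total) != want]
```

Writing the boundary case (`50.00`) down first forces the team to agree on whether the threshold is inclusive before anyone codes it.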

Preventive QA is not limited to “QA people.” It is a team discipline. Product managers, engineers, designers, and testers all contribute to it. The earlier a defect is prevented, the cheaper it is to fix and the less likely it is to impact customers.

What is detective QA?

Detective QA includes the practices that uncover defects after they already exist. These methods do not stop a bug from being introduced, but they increase the chances of finding it before users do.

This includes exploratory testing, automated regression checks, integration testing, monitoring, and defect triage. Detective QA is the safety net.

Even with strong prevention practices, defects still slip through because software systems are complex, requirements change, and humans make mistakes. Detective QA helps catch those issues while they are still manageable.

Exploratory testing is especially valuable because it can expose problems that scripted checks miss. A tester may discover confusing workflows, broken assumptions, or unusual combinations of actions that were never covered in formal test cases.

Automated regression suites help detect when a previously working feature has broken after a new change. Integration checks reveal whether services, APIs, databases, or third-party systems still work together correctly.

Monitoring and alerting extend detection into production by identifying errors, latency spikes, failed jobs, or unusual user behavior after release.

Defect triage is also part of detective QA. Finding a defect is useful only if the team can classify it, prioritize it, assign ownership, and learn from it. Without triage, detection becomes noise instead of insight.
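The triage steps described above (classify, prioritize, assign ownership) could be sketched roughly as follows. The severity scale, the `Defect` fields, and the `OWNERS` map are all assumptions made for this example, not a standard scheme.

```python
from dataclasses import dataclass

# Assumed severity scale: a lower rank is handled first.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}

# Hypothetical mapping from component to owning team.
OWNERS = {"checkout": "payments-team", "search": "discovery-team"}


@dataclass
class Defect:
    title: str
    severity: str        # one of the keys in SEVERITY_RANK
    affected_users: int  # rough estimate of impact
    component: str


def triage(defects):
    """Order defects by severity, then by impact, and attach an owner."""
    ordered = sorted(
        defects,
        key=lambda d: (SEVERITY_RANK[d.severity], -d.affected_users),
    )
    return [(d.title, OWNERS.get(d.component, "unassigned")) for d in ordered]
```

A defect that comes back as `"unassigned"` is itself a useful signal: it points at a component nobody has claimed.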

In short, detective QA is about visibility. It tells you what escaped your prevention layer and where the system is still vulnerable.

Preventive vs detective QA: side-by-side comparison

Preventive and detective QA are both essential, but they work in different ways. The easiest way to see the difference is to compare them directly.

| Aspect | Preventive QA | Detective QA |
|---|---|---|
| Purpose | Reduce defect creation | Find existing defects |
| Timing | Before or during development | During testing or after release |
| Examples | Code reviews, static analysis, acceptance criteria | Regression testing, exploratory testing, monitoring |
| Strengths | Reduces rework, improves clarity | Catches real-world issues, protects users |
| Limitations | Cannot eliminate all defects | Finds issues late when relied on alone |
| Best use cases | Complex logic, unclear requirements | Regression protection, production safety |

The biggest difference is not just timing. It is intent. Preventive QA tries to make defects less likely. Detective QA assumes some defects will still happen and builds a reliable way to find them.

Strong teams do not choose one or the other. They design a workflow where prevention reduces the load on detection, and detection validates what prevention cannot fully guarantee.

Why both are necessary

A healthy QA strategy needs both preventive and detective controls. Prevention lowers the number of defects entering the system. Detection catches what still slips through. Together, they create a much stronger quality posture than either approach alone.

This is especially important in real software environments where complexity keeps increasing. Teams ship faster, dependencies change more often, and users interact with products in ways that are hard to predict in planning meetings.

Even with excellent design, no team can fully eliminate defects before release. That means a purely preventive strategy will miss some risks.

On the other hand, a purely detective strategy pushes too much burden onto testing and production monitoring, which leads to late discovery and expensive fixes.

The practical logic is simple. Preventive QA reduces defect creation, which makes the system easier to test and support. Detective QA reduces defect escape, which protects users and gives the team feedback on what prevention missed. When a team uses both, the feedback loop becomes much stronger.

A defect caught in review can lead to better guidelines. A defect caught in regression can reveal a gap in acceptance criteria. A defect found in production can expose a weakness in design or test coverage.

That is why mature quality programs treat prevention and detection as complementary layers. Prevention handles the front door. Detection handles the back door. A strong system needs both secured.

Real-world examples in a software delivery pipeline

The best way to understand preventive and detective QA is to place them inside a typical software delivery pipeline.

1. Requirements review — preventive

This is one of the earliest opportunities to reduce defects. If requirements are vague, incomplete, or contradictory, the team is already at risk.

Reviewing requirements with product, engineering, and QA helps clarify acceptance criteria, edge cases, dependencies, and user expectations.

This is preventive because it reduces the chance of building the wrong feature.

2. Design review — preventive

Architecture and UX design reviews help catch issues before implementation starts.

A design review can reveal scalability concerns, security gaps, accessibility problems, or confusing user flows.

Like requirements review, this is preventive because it addresses defects before code is written.

3. Implementation — preventive and detective

During development, preventive QA includes practices such as code reviews, pair programming, and static analysis.

These reduce the number of defects introduced into the codebase. At the same time, unit tests and developer-run checks can be seen as early detection.

They help expose logic errors quickly, often within minutes of writing the code.

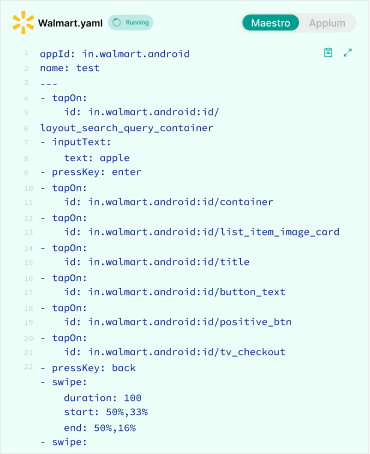

4. CI checks — detective

Continuous integration checks are classic detective controls. They validate whether the build compiles, tests pass, and key quality gates are met.

CI does not prevent a bug from being written, but it quickly detects breakage before merge or deployment.
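A quality gate of this kind can be pictured as a small function that inspects the results of a pipeline run. This is a minimal sketch: the `results` keys and the 80% coverage threshold are assumptions for illustration and do not correspond to any particular CI product.

```python
def evaluate_quality_gate(results: dict, min_coverage: float = 80.0) -> list:
    """Return a list of gate failures; an empty list means the gate passes.

    `results` is a hypothetical summary of one CI run with the keys
    'build_ok' (bool), 'tests_failed' (int), and 'coverage' (percent).
    """
    failures = []
    if not results.get("build_ok", False):
        failures.append("build failed")

    tests_failed = results.get("tests_failed", 0)
    if tests_failed > 0:
        failures.append(f"{tests_failed} test(s) failed")

    coverage = results.get("coverage", 0.0)
    if coverage < min_coverage:
        failures.append(
            f"coverage {coverage:.1f}% is below {min_coverage:.1f}%"
        )
    return failures
```

Returning every failure, rather than stopping at the first, gives the developer the full picture in a single run.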

5. Regression suite — detective

Regression testing is one of the clearest examples of detective QA. The purpose is to verify that new changes did not damage existing behavior.

A strong regression suite protects core workflows and reduces the risk of accidental breakage during frequent releases.

6. Production monitoring — detective

Observability, logging, alerting, and incident dashboards all belong here. Once software is live, monitoring can expose crashes, performance regressions, failed transactions, or unexpected user behavior.

This is detective QA in its most direct form: finding issues after release so they can be triaged and fixed.
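To make the alerting idea concrete, here is a minimal sketch of an error-rate check over a sliding window of recent requests. The class name, the window size, and the 5% threshold are assumptions for illustration; real monitoring stacks expose this through their own rule languages.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the most recent request outcomes and flag a high error rate."""

    def __init__(self, window_size: int = 100, threshold: float = 0.05):
        # Only the newest `window_size` outcomes are kept; True means failure.
        self.outcomes = deque(maxlen=window_size)
        self.threshold = threshold

    def record(self, failed: bool) -> None:
        """Record the outcome of one request."""
        self.outcomes.append(failed)

    def error_rate(self) -> float:
        """Fraction of failed requests in the current window."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        """True when the windowed error rate exceeds the threshold."""
        return self.error_rate() > self.threshold
```

A bounded window means old failures age out, so the alert clears on its own once the service recovers.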

Seen as a whole, the pipeline shows a pattern. Early stages are mostly preventive, later stages are mostly detective, and the best systems blend both throughout the journey.

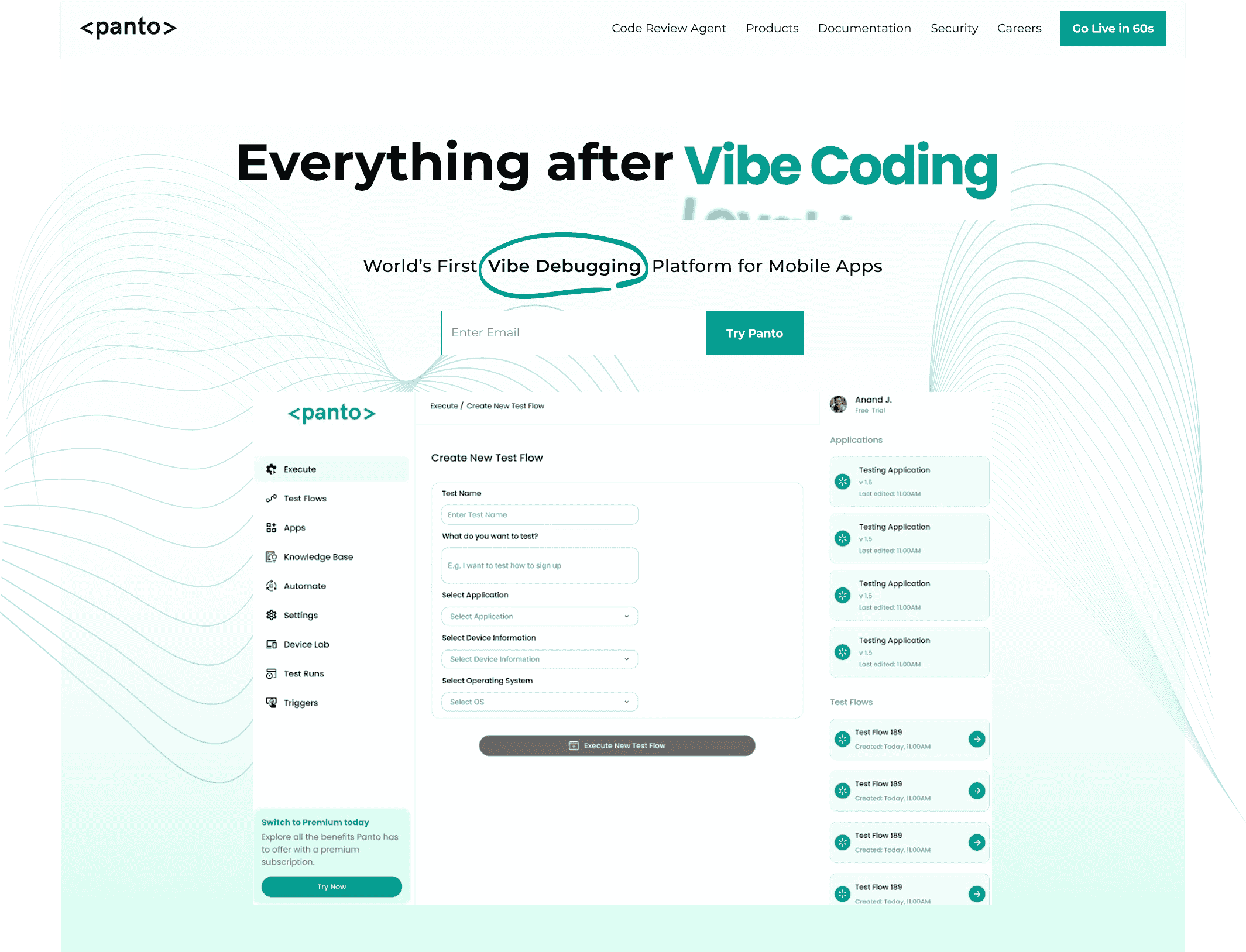

How to balance preventive and detective QA by team size

The right balance depends on how mature the team is, how often it ships, and how much risk it carries.

Startups

Startups should focus on the highest-leverage preventive practices first: clear acceptance criteria, lightweight design review, code review, and a few critical tests around core user journeys.

They should also keep detective QA lean but effective, using smoke tests, a small regression set, and basic monitoring. The priority is speed with guardrails, not building an enormous testing layer too early.

Scaling teams

As teams grow, the cost of misalignment rises. At this stage, preventive QA should expand into more formal review practices, stronger static analysis, and better test design during planning.

Detective QA should also mature: broader regression coverage, integration testing, exploratory sessions, and production observability become more important. Scaling teams need consistency more than heroic effort.

Enterprise teams

Enterprise environments usually have more systems, more dependencies, and more compliance pressure. They need both deep prevention and robust detection.

Preventive QA may include architectural review boards, security review, formal acceptance processes, and risk-based test planning. Detective QA should include layered automated testing, environment monitoring, audit trails, and defect triage workflows.

The goal is not just quality, but traceability and resilience at scale. The pattern is clear: smaller teams should start with the essentials, while larger teams need a broader quality system that spans the full lifecycle.

Metrics that matter

A balanced QA strategy should be measured, not guessed. The right metrics help teams see whether prevention is reducing defect creation and whether detection is catching what still gets through.

1. Defect leakage

Defect leakage measures how many defects escape one phase and appear in a later phase, especially in production. High leakage often means preventive controls are weak or detective controls are too late.

2. Escaped defects

Escaped defects are bugs found by users or in production after release. This is one of the most important business-facing indicators of QA effectiveness. If escaped defects are high, the current strategy is not enough.

3. Change failure rate

This measures how often a deployment causes a failure that requires remediation, rollback, or hotfixing. It is a strong signal for release quality and release readiness.
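As a rough sketch, change failure rate can be computed from a deployment log. The record shape used here (a dict with a boolean `failed` key) is an assumption for illustration.

```python
def change_failure_rate(deployments: list) -> float:
    """Percentage of deployments that needed remediation, rollback, or a hotfix.

    Each deployment is a dict with a boolean 'failed' key (hypothetical shape).
    """
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d["failed"])
    return 100.0 * failed / len(deployments)
```

For example, 3 failed deployments out of 20 gives a rate of 15%.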

4. Test flakiness

Flaky tests fail intermittently without a real product defect. High flakiness weakens detective QA because teams stop trusting the suite. It also creates noise that hides genuine issues.
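One simple heuristic for spotting flaky tests is to look for a test that both passed and failed on the same commit, since the code under test did not change between those runs. The sketch below assumes run history is available as `(test_name, commit_sha, passed)` tuples, which is a hypothetical format.

```python
from collections import defaultdict


def find_flaky_tests(runs) -> list:
    """Return names of tests with mixed outcomes on a single commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)

    # A test is flagged when one commit produced both a pass and a fail.
    flaky = {name for (name, _), seen in outcomes.items()
             if seen == {True, False}}
    return sorted(flaky)
```

A test that fails consistently on one commit is not flagged, because a consistent failure is more likely a real defect than noise.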

5. MTTR

Mean time to recovery measures how quickly the team can restore service after a defect or incident. Strong monitoring and triage processes improve MTTR, even when prevention is not perfect.
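A minimal sketch of the calculation, assuming each incident is recorded as a pair of detection and resolution timestamps (the record format is an assumption for illustration):

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents) -> timedelta:
    """Average time between detection and resolution.

    `incidents` is a list of (detected_at, resolved_at) datetime pairs.
    """
    if not incidents:
        return timedelta(0)
    total = sum(
        (resolved - detected for detected, resolved in incidents),
        timedelta(0),
    )
    return total / len(incidents)
```

Two incidents lasting 30 and 90 minutes give an MTTR of 60 minutes. A mean is sensitive to one long outage, so some teams track the median alongside it.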

6. Defect removal efficiency

This measures how effectively defects are removed before release compared with after release. It is a useful high-level indicator of whether the quality process is working upstream or merely shifting effort downstream.
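A commonly used formulation divides the defects found before release by all defects found, before and after. The sketch below follows that formulation. The function name is hypothetical, and how long after release defects are still counted is a choice each team has to make.

```python
def defect_removal_efficiency(found_before_release: int,
                              found_after_release: int) -> float:
    """Percentage of all known defects that were removed before release."""
    total = found_before_release + found_after_release
    if total == 0:
        raise ValueError("no defects recorded, efficiency is undefined")
    return 100.0 * found_before_release / total
```

With 90 defects found before release and 10 found after, the result is 90%. The remaining 10% is the share that leaked into production, which ties this metric back to defect leakage.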

These metrics are most valuable when viewed together. A drop in escaped defects is good, but only if it is not caused by slower delivery or excessive test maintenance. The goal is not a perfect number. The goal is a better system.

Common mistakes teams make

- Over-indexing on automation alone: Automated tests are valuable, but they are not a substitute for strong requirements, design reviews, or code reviews. Automation detects problems after they exist; it does not prevent them from being introduced.

- Equating coverage with quality: High code coverage does not necessarily mean high quality. Teams can achieve impressive coverage numbers while still missing critical scenarios, edge cases, and integration failures that impact real users.

- Relying too heavily on late-stage testing: When defects are discovered late in the cycle, they become more expensive and disruptive to fix. While late detection is better than no detection, it should not replace early preventive practices.

- Skipping test design and review: Some teams move directly from requirements to execution without considering risks, boundaries, failure paths, and user expectations. Skipping this step weakens both preventive and detective QA efforts.

When to use preventive vs detective QA

A practical way to think about preventive vs detective QA is not “which is better,” but where your current risk lies. Use the conditions below to decide where to invest.

1) If requirements are unclear → prioritize preventive QA

Signals:

- Frequent rework after development starts

- Misaligned expectations between product and engineering

- Features technically correct but functionally wrong

What to do:

- Strengthen acceptance criteria

- Add requirement and design reviews

- Introduce test design before implementation

Why it works:

Most defects here are created upstream, so detection later only increases cost.

2) If defects are escaping to production → strengthen both, start with detection

Signals:

- High escaped defects

- Customer-reported bugs

- Frequent hotfixes

What to do:

- Improve regression coverage

- Add production monitoring and alerting

- Run exploratory testing before release

Then:

- Backtrack root causes into preventive gaps

Why it works:

Detection acts as an immediate safety net, while prevention fixes the source over time.

3) If releases are frequent (CI/CD) → bias toward fast detection + lightweight prevention

Signals:

- Multiple deployments per day/week

- Heavy reliance on CI pipelines

- Short development cycles

What to do:

- Invest in fast, reliable automated regression

- Ensure CI checks are stable and meaningful

- Keep preventive practices lightweight but consistent (code reviews, clear criteria)

Why it works:

You need rapid feedback loops, not heavy upfront processes that slow delivery.

4) If development cycles are slow → increase preventive QA

Signals:

- Long QA cycles

- Late-stage defect discovery

- Large batch releases

What to do:

- Shift test design earlier

- Add stricter code reviews and static analysis

- Break features into smaller, testable units

Why it works:

Late detection is expensive in slow cycles. Prevention reduces downstream bottlenecks.

5) If systems are complex or high-risk → invest heavily in both

Signals:

- Distributed systems / microservices

- Financial, healthcare, or security-sensitive features

- High cost of failure

What to do:

- Preventive: architecture reviews, risk-based test design

- Detective: deep integration testing, monitoring, observability

Why it works:

Complex systems create unpredictable failure modes, so neither layer alone is sufficient.

6) If test suites are flaky or noisy → fix detection before scaling it

Signals:

- Intermittent test failures

- Low trust in automation

- Teams ignoring test results

What to do:

- Stabilize test environments

- Remove or fix flaky tests

- Improve signal-to-noise ratio

Why it works:

Broken detection systems reduce visibility, making both QA strategies ineffective.

7) If teams rely too much on testing alone → rebalance toward prevention

Signals:

- Heavy regression suites but recurring bugs

- High test coverage, low confidence

- QA bottlenecks before release

What to do:

- Move quality discussions earlier

- Improve requirement clarity

- Strengthen code review practices

Why it works:

Testing alone cannot prevent defects—it only detects them after creation.

A simple rule of thumb

- Preventive QA reduces defect creation

- Detective QA reduces defect escape

Quick decision matrix

| Situation | Priority |
|---|---|
| Unclear requirements | Preventive QA |
| Production bugs increasing | Detective → then Preventive |
| Fast CI/CD releases | Detective (fast feedback) |
| Slow release cycles | Preventive QA |
| High system complexity | Both equally |
| Flaky tests | Fix Detective QA first |
| Over-reliance on testing | Shift to Preventive QA |

Conclusion

Preventive QA reduces defect creation. Detective QA reduces defect escape. Strong teams do not treat them as competing strategies; they use them together.

A simple rule works well: prevent early, detect continuously, and learn from every escape. Start with preventive practices that improve clarity and reduce mistakes, then build detective layers that catch what still gets through.

That balance creates better software, safer releases, and less fire-fighting after launch.

FAQs

Q: What is the main difference between preventive and detective QA?

A: Preventive QA focuses on reducing defects before they are introduced into the system through practices such as requirement validation, design reviews, and code reviews. Detective QA, in contrast, identifies defects after they exist using testing, monitoring, and validation techniques before they reach end users.

Q: Is automated testing preventive or detective?

A: Most automated testing is detective in nature, particularly regression, integration, and CI-based test suites. However, automation can support prevention indirectly by providing fast feedback to developers during implementation, helping catch issues earlier in the development cycle.

Q: Which is more important: preventive or detective QA?

A: Both are essential. Preventive QA reduces the number of defects introduced, while detective QA finds the remaining defects, ideally before release and otherwise through production monitoring before they affect many users. High-performing teams balance both approaches to protect quality without slowing delivery.

Q: Where should a team start?

A: Teams should begin with foundational practices: clear and validated requirements, structured code reviews, well-defined acceptance criteria, a focused regression test suite, and reliable monitoring. From there, they can expand coverage based on system risk, complexity, and scale.