Continuous Integration was designed to accelerate delivery. Yet in large enterprises, CI pipelines often become the slowest part of the engineering lifecycle.

When test runtime expands beyond predictable limits, it impacts developer throughput, release cadence, and infrastructure cost. Over time, slow pipelines erode trust in automation itself.

For QA leaders, CI test runtime is not merely a performance metric. It is a systems-level constraint on organizational velocity.

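What is CI Runtime?

CI runtime is the total wall-clock time from the moment a pipeline is triggered until it reports a result. This includes time spent waiting for a runner, provisioning the environment, resolving dependencies, executing tests, and uploading artifacts.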

Evolution of CI Runtime

Continuous Integration originally emerged as a build verification mechanism rather than a comprehensive validation system.

In early CI implementations, pipelines primarily compiled code and executed small unit test suites.

Runtime remained short because application architectures were relatively monolithic, dependencies were limited, and infrastructure provisioning was minimal.

Over time, several industry shifts transformed CI runtime characteristics:

- Containerization introduced reproducible but heavier environments

- Microservices increased integration surface area

- Test automation expanded across UI, API, and contract layers

- Cloud infrastructure enabled larger but more complex pipelines

As organizations scaled engineering teams and systems, CI pipelines gradually absorbed responsibilities once handled manually or post-release. The result was predictable: runtime expanded alongside confidence requirements.

Modern CI pipelines therefore represent not just build validation, but a compressed simulation of production behavior — making runtime growth a structural outcome rather than an anomaly.

Understanding the Components of CI Runtime

CI test runtime is rarely caused by one issue. It is typically an aggregation of delays across multiple layers of the pipeline.

A useful decomposition model looks like this:

| Component | What It Includes | Typical Enterprise Impact |
|---|---|---|
| Queue Time | Waiting for runners | High during peak commits |
| Environment Provisioning | Container or VM startup | Slower with heavy images |
| Dependency Resolution | Installing packages | Redundant installs inflate runtime |
| Test Execution | Actual test logic | Largest runtime contributor |
| Artifact Upload | Logs, reports, binaries | Network and storage dependent |

Without decomposing runtime, optimization efforts become guesswork.
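To make the decomposition concrete, the sketch below totals per-stage durations across runs and reports each component's share of overall runtime. It assumes the CI system exposes stage timings per run; the `PipelineRun` field names and the sample values are hypothetical, not taken from any particular platform's API.

```python
from dataclasses import asdict, dataclass


@dataclass
class PipelineRun:
    """Per-stage durations (seconds) for one CI run; field names are hypothetical."""
    queue: float
    provisioning: float
    dependencies: float
    tests: float
    artifacts: float


def component_shares(runs: list[PipelineRun]) -> dict[str, float]:
    """Return each component's fraction of total runtime across all runs."""
    totals: dict[str, float] = {}
    for run in runs:
        for component, seconds in asdict(run).items():
            totals[component] = totals.get(component, 0.0) + seconds
    grand_total = sum(totals.values())
    return {component: seconds / grand_total for component, seconds in totals.items()}


# Illustrative sample data, not measurements.
runs = [
    PipelineRun(queue=120, provisioning=90, dependencies=150, tests=900, artifacts=40),
    PipelineRun(queue=300, provisioning=95, dependencies=140, tests=880, artifacts=45),
]
shares = component_shares(runs)
for component, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{component:>13}: {share:.0%}")
```

Ranking components this way points optimization effort at the dominant contributor first, rather than at whichever stage is easiest to change.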

The Throughput Cost of Slow CI

In enterprise environments, full regression runs can take 30–60 minutes or longer.

Consider a team running 150 CI pipelines per day. Ten minutes of excess runtime per pipeline results in:

- 1,500 minutes lost daily

- 25 hours of cumulative pipeline latency

- Delayed merges and blocked releases

At scale, this compounds into measurable delivery drag.

Why Enterprise Test Suites Grow Unbounded

Test runtime inflation is usually gradual.

Common causes include:

- Accumulation of end-to-end (E2E) tests

- Duplicate regression coverage

- Flaky test retries

- Expanding integration layers

- Environment setup complexity

Over time, optimization becomes reactive instead of architectural.

The Maturity Gap in CI Governance

Many enterprises monitor test pass/fail rates but do not monitor runtime trends.

High-maturity teams track:

- Average test duration

- 95th percentile runtime

- Queue wait time

- Cost per CI run

- Flake rate vs runtime correlation

Runtime governance prevents silent performance regression.

The Speed–Confidence Tradeoff in CI Testing

Reducing CI runtime is not purely an optimization problem. It is a balance between feedback speed and validation confidence.

Every additional test increases defect detection probability, but also introduces execution cost. Beyond a certain threshold, incremental confidence gains diminish while runtime increases linearly or worse.

This creates an inherent tradeoff:

| Optimization Strategy | Potential Risk |
|---|---|
| Selective test execution | Undetected regressions |
| Heavy mocking | Integration blind spots |
| Aggressive parallelization | Hidden concurrency issues |
| Reduced E2E coverage | Workflow validation gaps |

High-performing teams do not aim for the fastest possible CI pipeline. Instead, they aim for optimal confidence per minute of runtime.

Understanding this tradeoff reframes optimization from speed maximization to validation efficiency.

Proven Strategies to Reduce CI Test Runtime at Scale

Reducing CI test runtime requires layered intervention. Quick fixes rarely sustain improvements at enterprise scale.

The Four Dimensions of CI Runtime Health

Runtime alone does not fully describe pipeline performance. Mature organizations evaluate CI health across four dimensions:

1. Speed

Average pipeline duration and developer feedback latency.

2. Predictability

Variance between runs. Highly variable pipelines reduce planning confidence even when averages appear acceptable.

3. Efficiency

Infrastructure cost relative to validation value delivered.

4. Reliability

Runtime impact caused by flaky tests, retries, or environmental instability.

A pipeline that is fast but unpredictable — or cheap but unreliable — still constrains delivery velocity. Balanced optimization across all four dimensions produces sustainable improvements.

The following strategies are organized by optimization maturity.

Foundational Efficiency Improvements

These are baseline practices every enterprise CI system should implement.

1. Parallel Test Execution

Parallelization distributes test execution across multiple workers.

If 1,200 tests take 40 minutes sequentially, splitting them across 8 workers gives a theoretical floor of 5 minutes (40 ÷ 8). In practice, per-worker setup and I/O constraints push the result to approximately 6–8 minutes.

Key considerations:

- Ensure tests are isolated

- Remove shared state

- Avoid inter-test dependencies

Parallelization amplifies architectural weaknesses if isolation is poor.
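The 6–8 minute figure can be sanity-checked with a simple model: a perfectly balanced split gives sequential time divided by worker count, and each worker then pays a fixed setup cost. This is a rough sketch; the 1.5-minute per-worker overhead is an assumed value, and real pipelines also lose time to I/O contention and uneven shards.

```python
def estimate_parallel_minutes(sequential_minutes: float, workers: int,
                              per_worker_overhead_minutes: float = 0.0) -> float:
    """Idealized wall-clock estimate: perfectly balanced split plus fixed per-worker setup.

    Assumes isolated, evenly divisible tests; contention and shard imbalance
    make real runs slower, so treat the result as a lower bound.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")
    return sequential_minutes / workers + per_worker_overhead_minutes


print(estimate_parallel_minutes(40, 8))       # theoretical floor: 5.0
print(estimate_parallel_minutes(40, 8, 1.5))  # with an assumed 1.5 min setup per worker: 6.5
```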

2. Deterministic Test Environments

Non-deterministic environments introduce retries and flakiness, inflating runtime.

Best practices include:

- Immutable container images

- Fixed dependency versions

- Reproducible database seeds

- Time and timezone normalization

Determinism reduces both runtime variance and debugging cycles.
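Here is a minimal sketch of two of these practices, assuming tests receive time through an injected clock rather than calling `datetime.now()` directly: a fixed, timezone-aware clock and a locally seeded random generator for seed data. `FixedClock` and `build_seed_orders` are hypothetical helpers, not part of any test framework.

```python
import random
from datetime import datetime, timezone


class FixedClock:
    """Test clock that always returns the same UTC instant (hypothetical helper)."""

    def __init__(self, instant: datetime):
        if instant.tzinfo is None:
            raise ValueError("use a timezone-aware datetime to avoid host-timezone drift")
        self._instant = instant.astimezone(timezone.utc)  # normalize to UTC

    def now(self) -> datetime:
        return self._instant


def build_seed_orders(clock: FixedClock, seed: int = 1234) -> list[dict]:
    """Generate reproducible seed rows: same seed and same clock give identical data."""
    rng = random.Random(seed)  # local RNG, unaffected by other tests using the global `random`
    return [
        {"id": i, "amount": rng.randint(1, 500), "created_at": clock.now().isoformat()}
        for i in range(3)
    ]


clock = FixedClock(datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc))
print(build_seed_orders(clock) == build_seed_orders(clock))
```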

3. Dependency Caching

Repeated dependency installation is a common runtime drain.

Effective caching reduces redundant work:

- Package manager caches

- Docker layer caching

- Build artifact reuse

- Language runtime caching

Platforms such as GitHub Actions, Jenkins, and GitLab CI/CD support advanced caching strategies.

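Caches should be version-aware, for example keyed on a hash of the dependency lockfile, so that a dependency change invalidates the cache instead of restoring stale artifacts.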
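One way to make a cache key version-aware is to derive it from the lockfile contents plus the toolchain version. This is the same idea as keying a GitHub Actions cache on `hashFiles()` of a lockfile. The function below is a hypothetical sketch, not any platform's API.

```python
import hashlib


def dependency_cache_key(lockfile_contents: bytes, runtime_version: str, os_name: str) -> str:
    """Cache key that changes whenever pinned dependencies or the toolchain change."""
    digest = hashlib.sha256(lockfile_contents).hexdigest()[:16]  # truncated for readable logs
    return f"deps-{os_name}-{runtime_version}-{digest}"


# Typical use (hypothetical path):
#   key = dependency_cache_key(Path("poetry.lock").read_bytes(), "3.12", "linux")
old_key = dependency_cache_key(b"requests==2.31.0\n", "3.12", "linux")
new_key = dependency_cache_key(b"requests==2.32.0\n", "3.12", "linux")
print(old_key != new_key)  # a version bump yields a new key, so nothing stale is restored
```

Including the OS and runtime version in the key prevents a cache built on one toolchain from being restored onto another.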

4. Incremental and Selective Test Runs

Running the entire regression suite for every commit is often unnecessary.

Change-based test selection executes only relevant tests based on:

- Modified files

- Dependency graphs

- Impact analysis
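The dependency-graph approach can be sketched as a reverse traversal: start from the modified files, walk to every module that transitively imports them, and keep the test modules. The graph here is hypothetical. In practice, it would come from build metadata or import analysis. Falling back to the full suite when a changed file is outside the graph is one conservative design choice among several.

```python
from collections import deque

# Hypothetical import graph: module -> modules it imports directly.
IMPORTS: dict[str, set[str]] = {
    "tests/test_cart.py": {"app/cart.py"},
    "tests/test_checkout.py": {"app/checkout.py"},
    "tests/test_search.py": {"app/search.py"},
    "app/checkout.py": {"app/cart.py", "app/payments.py"},
    "app/cart.py": {"app/pricing.py"},
    "app/search.py": set(),
    "app/payments.py": set(),
    "app/pricing.py": set(),
}


def select_tests(changed: set[str], imports: dict[str, set[str]]) -> set[str]:
    """Return test modules that transitively depend on any changed file."""
    all_tests = {m for m in imports if m.startswith("tests/")}
    if changed - set(imports):
        return all_tests  # file outside the graph (e.g. CI config): run everything

    # Invert the graph: module -> modules that import it.
    dependents: dict[str, set[str]] = {}
    for module, deps in imports.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    # Breadth-first walk from the changed files to everything that depends on them.
    affected, queue = set(changed), deque(changed)
    while queue:
        for parent in dependents.get(queue.popleft(), set()):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected & all_tests


print(sorted(select_tests({"app/pricing.py"}, IMPORTS)))
```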

Trade-off considerations:

| Benefit | Risk | Mitigation |
|---|---|---|
| Faster feedback | Missed regression | Periodic full-suite runs |
| Reduced compute cost | False confidence | Impact analysis validation |

Selective test execution requires disciplined test mapping.

Scaling Strategies for Large Test Suites

When test counts exceed several thousand, foundational techniques alone are insufficient.

1. Intelligent Test Sharding

Static test splitting can create uneven workloads.

Intelligent sharding distributes tests based on historical execution time rather than test count.

Example:

| Worker | Tests Assigned | Expected Runtime |
|---|---|---|
| Worker 1 | 150 | 7.2 min |
| Worker 2 | 120 | 7.1 min |
| Worker 3 | 160 | 7.3 min |

Balanced shards reduce idle runner time and improve overall efficiency.
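A common way to implement duration-based sharding is the greedy longest-processing-time-first heuristic. It sorts tests by historical duration and always assigns the next test to the least-loaded worker. This sketch assumes per-test durations from previous runs are available; the test names and timings are illustrative.

```python
import heapq


def shard_by_duration(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Greedy longest-processing-time-first split using historical durations (seconds)."""
    heap = [(0.0, w) for w in range(workers)]  # (assigned seconds, worker index); already a valid heap
    shards: list[list[str]] = [[] for _ in range(workers)]
    # Longest tests first, each to the currently least-loaded worker.
    for test, seconds in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        load, worker = heapq.heappop(heap)
        shards[worker].append(test)
        heapq.heappush(heap, (load + seconds, worker))
    return shards


# Illustrative historical durations in seconds.
durations = {"test_a": 300, "test_b": 240, "test_c": 200, "test_d": 120, "test_e": 90, "test_f": 60}
shards = shard_by_duration(durations, workers=3)
print([sum(durations[t] for t in shard) for shard in shards])
```

With these sample durations, the shard totals come out to 360, 330, and 320 seconds. A naive split by test count could easily put the two longest tests on the same worker.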

2. Flaky Test Quarantine with SLA Tracking

Flaky tests inflate runtime through retries and reruns.

A structured quarantine policy:

- Automatically isolates flaky tests

- Runs them separately

- Tracks resolution SLA

- Prevents regression suite contamination

Important governance principle: quarantine should be temporary, not permanent exclusion.

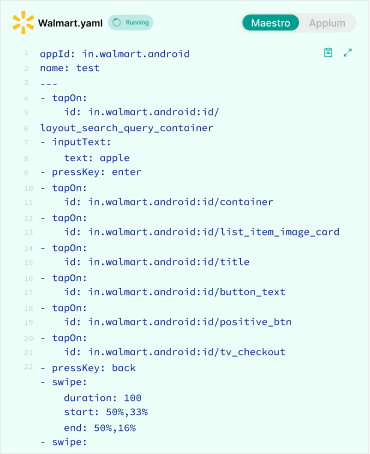
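The quarantine policy can be modeled as a small registry. This sketch assumes a test counts as flaky when it both passes and fails on the same commit, and it uses a 14-day SLA as a placeholder. Both the heuristic and the class names are assumptions to adapt, not a standard.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class QuarantineEntry:
    test_id: str
    quarantined_on: date
    owner: str
    sla_days: int = 14  # assumed SLA; set per team policy

    @property
    def due(self) -> date:
        return self.quarantined_on + timedelta(days=self.sla_days)


@dataclass
class Quarantine:
    entries: dict[str, QuarantineEntry] = field(default_factory=dict)

    def record_result(self, test_id: str, outcomes_on_same_commit: list[bool],
                      owner: str, today: date) -> None:
        """Quarantine a test that both passed and failed on the same commit."""
        if True in outcomes_on_same_commit and False in outcomes_on_same_commit:
            self.entries.setdefault(test_id, QuarantineEntry(test_id, today, owner))

    def is_blocking(self, test_id: str) -> bool:
        """Quarantined tests still run, but in a separate non-blocking job."""
        return test_id not in self.entries

    def release(self, test_id: str) -> None:
        """Return a fixed test to the blocking regression suite."""
        self.entries.pop(test_id, None)

    def overdue(self, today: date) -> list[QuarantineEntry]:
        """Entries past their SLA, for escalation to the owning team."""
        return [e for e in self.entries.values() if today > e.due]


q = Quarantine()
q.record_result("test_login", [True, False, True], owner="team-auth", today=date(2024, 1, 1))
print(q.is_blocking("test_login"), [e.test_id for e in q.overdue(date(2024, 1, 20))])
```

Tracking an owner and due date per entry is what keeps quarantine temporary: overdue entries surface in reports instead of silently becoming permanent exclusions.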

3. Reducing Over-Reliance on End-to-End Tests

E2E tests are resource-intensive.

Enterprises often accumulate excessive E2E coverage because it feels safer.

Optimization approach:

- Shift logic validation to unit and contract tests

- Reduce redundant UI-level flows

- Replace broad regression scripts with targeted, automated scripts

Redistributing coverage across test layers, rather than concentrating it in E2E suites, can reduce runtime significantly.

4. Service Virtualization

External dependencies increase test latency.

Service virtualization replaces real integrations with simulated services.

Benefits include:

- Reduced network latency and faster test execution

- Elimination of third-party rate limits

- Predictable response times

This stabilizes both runtime and reliability.
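In its lightest form, service virtualization can be an in-process fake that satisfies the same interface as the real client. The sketch below uses a hypothetical exchange-rate dependency. Dedicated tools such as WireMock apply the same idea at the HTTP level by serving stubbed responses from a local server.

```python
from typing import Protocol


class ExchangeRateClient(Protocol):
    def rate(self, base: str, quote: str) -> float: ...


class VirtualExchangeRateService:
    """Stand-in for a third-party rates API: in-memory, instant, no rate limits."""

    def __init__(self, canned_rates: dict[tuple[str, str], float]):
        self._rates = canned_rates
        self.calls: list[tuple[str, str]] = []  # lets tests assert on the interaction

    def rate(self, base: str, quote: str) -> float:
        self.calls.append((base, quote))
        try:
            return self._rates[(base, quote)]
        except KeyError:
            raise LookupError(f"no canned rate for {base}/{quote}") from None


def convert(amount: float, base: str, quote: str, client: ExchangeRateClient) -> float:
    """Code under test depends only on the protocol, not on the real HTTP client."""
    return round(amount * client.rate(base, quote), 2)


fake = VirtualExchangeRateService({("USD", "EUR"): 0.5})
print(convert(10.0, "USD", "EUR", fake))
```

Because responses are canned, each call returns instantly and identically. The tradeoff noted earlier still applies: this hides real integration behavior, so contract tests against the actual provider remain necessary.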

Infrastructure-Level Optimization

Pipeline runtime is constrained not only by test logic but by infrastructure architecture.

1. Runner Sizing and Resource Allocation

Undersized runners create CPU and memory contention.

Monitoring metrics to review:

- CPU utilization

- Memory pressure

- Disk I/O

- Network bandwidth

Scaling runners vertically may yield immediate runtime reduction.

2. Container Optimization

Heavy container images slow environment provisioning.

Optimization steps:

- Use minimal base images

- Remove unused packages

- Reduce image layers

- Pre-bake dependencies

Smaller images accelerate startup time and reduce caching overhead.

3. Autoscaling Strategy

Static runner pools cause queue buildup during commit spikes.

Autoscaling runners dynamically adjust capacity based on demand.

Key parameters:

- Scale-up threshold

- Cool-down window

- Maximum concurrency limit

Well-configured autoscaling reduces queue time without inflating infrastructure cost.
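The three parameters can be combined into a simple per-tick decision rule. The sketch below is illustrative only. The default values and the one-runner-per-queued-job scale-up rule are assumptions, and managed autoscalers expose their own configuration instead.

```python
from dataclasses import dataclass


@dataclass
class AutoscalePolicy:
    scale_up_queue_threshold: int = 5  # queued jobs that trigger a scale-up
    cooldown_seconds: float = 300.0    # minimum gap between scaling actions
    max_runners: int = 40              # hard concurrency (and cost) ceiling
    min_runners: int = 2               # warm floor for small bursts


def desired_runners(policy: AutoscalePolicy, current: int, queued_jobs: int,
                    seconds_since_last_change: float) -> int:
    """Target runner count for one autoscaler tick (illustrative rule, not a vendor algorithm)."""
    if seconds_since_last_change < policy.cooldown_seconds:
        return current  # inside the cool-down window: hold steady to avoid thrashing
    if queued_jobs >= policy.scale_up_queue_threshold:
        return min(current + queued_jobs, policy.max_runners)  # one runner per waiting job, capped
    if queued_jobs == 0:
        return max(current - 1, policy.min_runners)  # drain idle capacity gradually
    return current


policy = AutoscalePolicy()
print(desired_runners(policy, current=10, queued_jobs=8, seconds_since_last_change=600))
```

Scaling up aggressively but down one runner at a time is a deliberate asymmetry: queue time hurts developers immediately, while a few idle minutes cost comparatively little.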

Reducing Waste in CI Pipelines

Optimization is not only about acceleration. It is about eliminating non-value work.

1. Removing Redundant Test Layers

Overlapping coverage between unit, integration, and E2E tests increases runtime without increasing defect detection probability.

Periodic coverage audits help identify redundancy.

2. Optimizing Test Setup and Teardown

Slow setup routines often dominate runtime.

Common inefficiencies include:

- Full database rebuild per test

- Repeated authentication flows

- Large fixture loading

Techniques to reduce overhead:

- Shared read-only datasets

- Snapshot-based database resets

- Token reuse mechanisms
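Snapshot-based resets can be illustrated with SQLite's online backup API (`sqlite3.Connection.backup`). The fixture database is seeded once, and every test gets a fresh copy instead of rebuilding it. Table names and rows are hypothetical. Server databases have analogous mechanisms, such as PostgreSQL template databases.

```python
import sqlite3


def build_snapshot() -> sqlite3.Connection:
    """Build the seeded fixture database once per test session."""
    snapshot = sqlite3.connect(":memory:")
    snapshot.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    snapshot.executemany("INSERT INTO users (name) VALUES (?)", [("ada",), ("grace",)])
    snapshot.commit()
    return snapshot


def fresh_db(snapshot: sqlite3.Connection) -> sqlite3.Connection:
    """Give each test its own copy by copying pages from the snapshot instead of re-seeding."""
    conn = sqlite3.connect(":memory:")
    snapshot.backup(conn)
    return conn


SNAPSHOT = build_snapshot()
db = fresh_db(SNAPSHOT)
db.execute("DELETE FROM users")  # one test mutates its copy...
print(fresh_db(SNAPSHOT).execute("SELECT COUNT(*) FROM users").fetchone()[0])  # ...the next copy is intact
```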

3. Artifact Management Optimization

Uploading excessive logs and artifacts slows CI completion.

Best practices:

- Upload artifacts conditionally on failure

- Compress logs

- Retain only critical diagnostics

Leaner artifact handling shortens end-to-end cycle time.
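The first two practices can be sketched as a small pre-upload step that returns nothing for passing runs and gzip-compresses logs for failing ones. The function name and paths are hypothetical. The actual upload would still be performed by the CI platform's own artifact mechanism.

```python
import gzip
import shutil
import tempfile
from pathlib import Path


def prepare_artifacts(job_failed: bool, log_files: list[Path], out_dir: Path) -> list[Path]:
    """Return gzip-compressed copies of logs to upload, or nothing when the job passed."""
    if not job_failed:
        return []  # passing runs upload no logs
    out_dir.mkdir(parents=True, exist_ok=True)
    archives = []
    for log in log_files:
        target = out_dir / f"{log.name}.gz"
        with log.open("rb") as src, gzip.open(target, "wb") as dst:
            shutil.copyfileobj(src, dst)  # stream, so large logs are not held in memory
        archives.append(target)
    return archives


with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "test-output.log"
    log.write_text("FAILED tests/test_checkout.py::test_total\n" * 1000)
    archives = prepare_artifacts(True, [log], Path(tmp) / "upload")
    compressed_smaller = archives[0].stat().st_size < log.stat().st_size
print(compressed_smaller)
```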

Observability and Continuous Runtime Governance

Sustainable runtime reduction requires measurement discipline.

1. Runtime Trend Monitoring

Track runtime metrics over time:

- Average duration

- 95th percentile

- Max duration

- Standard deviation

Spikes often correlate with new test additions or dependency changes.
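The four metrics can be computed from a list of recent pipeline durations, and a simple threshold on p95 can flag the spikes described above. This sketch uses the nearest-rank definition of the 95th percentile and assumes a 10% tolerance. Both are choices to tune rather than standards.

```python
import math
import statistics


def runtime_summary(durations_min: list[float]) -> dict[str, float]:
    """Mean, p95, max, and standard deviation of pipeline durations (minutes)."""
    ordered = sorted(durations_min)
    p95_index = math.ceil(0.95 * len(ordered)) - 1  # nearest-rank percentile
    return {
        "mean": statistics.fmean(ordered),
        "p95": ordered[p95_index],
        "max": ordered[-1],
        "stdev": statistics.stdev(ordered),  # needs at least two samples
    }


def is_regression(baseline: list[float], recent: list[float], tolerance: float = 1.10) -> bool:
    """Flag when recent p95 exceeds the baseline p95 by more than the assumed tolerance."""
    return runtime_summary(recent)["p95"] > tolerance * runtime_summary(baseline)["p95"]


summary = runtime_summary([float(x) for x in range(1, 21)])
print(summary)
```

Alerting on p95 rather than the mean catches the slow tail that developers actually experience, while the standard deviation tracks the predictability dimension described earlier.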

2. Cost Per CI Run Analysis

Enterprise leaders should quantify:

| Metric | Example |
|---|---|
| Average runtime | 22 minutes |
| Cost per minute | $0.08 |
| Cost per run | $1.76 |
| Daily runs | 200 |
| Daily CI cost | $352 |

Runtime reduction directly reduces infrastructure expenditure.
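The arithmetic in the table generalizes to a small helper, which also makes it easy to price a proposed runtime reduction. The 5-minute reduction in the usage lines is hypothetical.

```python
def ci_cost(avg_runtime_min: float, cost_per_min: float, daily_runs: int) -> tuple[float, float]:
    """Return (cost per run, daily cost) from average runtime, per-minute price, and run volume."""
    per_run = avg_runtime_min * cost_per_min
    return per_run, per_run * daily_runs


per_run, daily = ci_cost(22, 0.08, 200)
print(f"${per_run:.2f} per run, ${daily:.2f} per day")  # $1.76 per run, $352.00 per day

_, reduced_daily = ci_cost(17, 0.08, 200)  # hypothetical 5-minute reduction
print(f"savings: ${daily - reduced_daily:.2f} per day")
```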

3. Feedback Cycle Compression

Shorter CI runtime reduces developer context switching.

Benefits include:

- Faster merge approvals

- Reduced rebase conflicts

- Shorter feature branch lifetime

- Improved release predictability

Pipeline efficiency improves engineering morale and stability.

How CI Runtime Shapes Engineering Behavior

CI runtime influences developer behavior more than most organizations realize.

When feedback cycles lengthen, engineers unconsciously adapt their workflows:

- Commits become larger to avoid repeated waits

- Developers delay pushes until “ready,” reducing incremental validation

- Feature branches live longer, increasing merge complexity

- Teams rely on local testing assumptions rather than shared automation

These behavioral adaptations introduce secondary risks unrelated to tooling itself.

Long CI pipelines can therefore create organizational friction even when technically reliable. Conversely, fast and predictable pipelines encourage smaller changes, faster iteration, and safer deployments.

CI runtime should therefore be viewed as both a technical metric and a behavioral influence within engineering systems.

Why CI Runtime Optimization Efforts Often Stall

Many optimization initiatives produce temporary gains but fail to sustain improvement.

Common reasons include:

- Optimizing test execution while ignoring queue time

- Increasing parallel workers without addressing shared bottlenecks

- Treating flakiness as a quality issue rather than a runtime multiplier

- Lack of ownership for CI performance

- Absence of runtime benchmarks or service-level expectations

Without governance, runtime gradually regresses as new tests and dependencies accumulate.

Sustained improvement requires treating CI runtime as an evolving engineering system rather than a one-time efficiency project.

The Future of CI Runtime Management

CI runtime management is evolving from reactive optimization toward predictive orchestration.

Emerging practices include:

- Runtime prediction using historical execution data

- Dynamic test prioritization based on change risk

- Ephemeral environments created per workflow

- Continuous test impact analysis

- Automated detection of runtime regressions

As pipelines grow more complex, manual optimization becomes insufficient. Future CI systems increasingly emphasize adaptive execution — deciding not only how tests run, but which tests should run at all.

Organizations that adopt measurement-driven runtime governance early are better positioned to scale engineering throughput without proportional increases in infrastructure cost.

Mental Models for Understanding CI Runtime Growth

Several conceptual models help explain why runtime expands over time:

Runtime behaves like technical debt.

Each added test introduces marginal cost that compounds silently.

Confidence scales sublinearly while execution cost scales linearly.

More tests do not increase quality assurance proportionally.

CI pipelines mirror system complexity.

Slow pipelines often reflect architectural coupling rather than tooling inefficiency.

Viewing runtime through these models helps teams diagnose root causes instead of applying surface-level optimizations.

Common Mistakes When Optimizing CI Runtime

Enterprises frequently make avoidable errors:

- Adding more parallel workers without addressing bottlenecks

- Ignoring flaky tests while focusing only on speed

- Optimizing test execution but not queue time

- Removing tests without coverage validation

- Failing to monitor long-term regression

Optimization must be systematic rather than reactive.

A Practical Implementation Roadmap

For enterprise QA teams, a phased approach is recommended.

1. Baseline Measurement

- Decompose runtime

- Identify dominant contributors

- Establish performance benchmarks

2. Quick Efficiency Gains

- Enable caching

- Introduce parallelization

- Optimize container images

3. Structural Improvements

- Implement intelligent sharding

- Reduce E2E dependency

- Introduce virtualization

4. Governance

- Track runtime trends

- Define SLA for flake resolution

- Review cost metrics quarterly

Continuous monitoring prevents runtime relapse.

Conclusion

Reducing CI test runtime in enterprise environments is not a one-time optimization. It is an ongoing engineering discipline.

The objective is not merely faster builds. It is predictable, reliable, and cost-efficient feedback cycles.

By decomposing runtime, applying layered optimizations, and instituting governance mechanisms, QA leaders can transform CI from a bottleneck into a throughput accelerator.

In high-scale environments, disciplined CI runtime management becomes a competitive advantage rather than a maintenance task.