High test coverage looks reassuring on a dashboard. It suggests the team is writing tests, protecting the codebase, and catching regressions before they ship.

But coverage can create a dangerous illusion: a project can show impressive numbers and still release broken features, customer-facing defects, and costly production incidents.

The reason is simple. Coverage measures execution, not correctness.

That does not mean test coverage is useless. It absolutely has value. Coverage helps teams identify untested code, encourages discipline, and provides a rough signal of test completeness.

But coverage is only one signal. To build software that actually holds up in production, teams need to understand what coverage measures, where it falls short, and which metrics tell a more accurate story.

What test coverage actually measures

The term test coverage is often used broadly, but in practice it usually refers to code coverage: how much of the source code is exercised by tests.

Depending on the tool, that may include line coverage, statement coverage, branch coverage, or path coverage.

- Line coverage is the most common. If a line of code runs during a test, it counts as covered.

- Statement coverage is similar, but measured at the statement level.

- Branch coverage goes deeper by checking whether both sides of conditionals are exercised, such as the `if` and `else` paths.

- Path coverage attempts to account for combinations of execution routes through the code.

| Type | What It Covers | Limitation |
|---|---|---|
| Line coverage | Lines executed | No validation of correctness |
| Statement coverage | Statements executed | Similar to line coverage |
| Branch coverage | Conditional paths | Misses data combinations |
| Path coverage | Execution paths | Not scalable in real systems |
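The gap between line coverage and branch coverage is easiest to see on a tiny function. The sketch below is illustrative only: `apply_discount` and its 10% member rule are hypothetical, and prices are kept in integer cents to avoid rounding noise.

```python
def apply_discount(price_cents: int, is_member: bool) -> int:
    """Hypothetical pricing rule: members get 10% off."""
    if is_member:
        price_cents = price_cents * 90 // 100
    return price_cents


def test_member_discount() -> None:
    # This single test executes every line of apply_discount, so a
    # line-coverage tool would report the function as fully covered.
    assert apply_discount(10_000, is_member=True) == 9_000


# Branch coverage would still flag a gap: the path where is_member is
# False never runs, so a bug affecting only non-members stays invisible.
test_member_discount()
```

One test is enough for full line coverage here, yet half of the decision outcomes are never exercised.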

These metrics are useful, but they answer a narrow question: did the test execute this code?

They do not answer the more important questions:

- Did the test validate the correct business rule?

- Did it catch the wrong output?

- Did it simulate realistic input?

- Did it include failure states?

- Did it protect the integration between services?

- Did it reflect what a user actually does?

A test can execute a line and still be worthless if it contains no meaningful assertion. It can cover a branch and still miss the bad data combination that triggers the bug. It can achieve 95% coverage and still leave critical workflows exposed.

That is why teams that chase coverage percentages alone often end up with tests that look complete on paper but fail in practice.

Coverage vs. test effectiveness

| Dimension | Code Coverage | Test Effectiveness |
|---|---|---|
| What it measures | Code execution | Risk reduction |
| Focus | Lines, branches, paths | User outcomes and behavior |
| Guarantees correctness | No | No, but gives much stronger evidence when well designed |
| Detects integration issues | Rarely | Yes |
| Reflects real-world usage | No | Yes |
| Easy to game | Yes | Much harder |
| Business relevance | Indirect | Direct |

Coverage and effectiveness are not the same thing.

Coverage asks: Was the code executed?

Effectiveness asks: Did the test reduce the risk of failure?

That distinction matters. A test suite can be technically complete and strategically weak. It can touch most of the codebase while still missing the behaviors that break the product in the real world. If a test does not prove something meaningful about the product, the fact that it executed the code adds little.

Why high coverage still misses bugs

| Blind Spot | What Coverage Shows | What Actually Breaks |
|---|---|---|
| Weak assertions | Code executed | Incorrect output passes |
| Data combinations | Branches covered | Edge-case inputs fail |
| Integrations | Units tested | Services don’t work together |
| Environment differences | Tests pass locally | Fails in production |
| Edge cases | Happy paths covered | Real user behavior breaks |
| UI & devices | Components render | Layout/UX issues |

1. Tests can execute code without asserting the right outcome

One of the most common coverage traps is assuming that execution equals validation. A test may call the right function, hit the right branch, and still miss the actual defect because the assertions are too weak.

For example, a test might check only that a payment function returns something truthy. That does not prove the amount charged is correct, the currency is correct, the receipt was generated, or the downstream payment gateway received the right payload.
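A minimal sketch of that payment scenario follows. Everything in it is hypothetical: the `charge` function, the `Receipt` type, and the deliberate currency bug exist only to contrast a weak assertion with a stronger one.

```python
from dataclasses import dataclass


@dataclass
class Receipt:
    amount_cents: int
    currency: str


def charge(amount_cents: int, currency: str) -> Receipt:
    """Hypothetical payment function with a deliberate bug:
    it ignores the requested currency and always records USD."""
    return Receipt(amount_cents=amount_cents, currency="USD")


def weak_test() -> bool:
    # Executes every line of charge(), so coverage looks complete,
    # but only checks that something truthy came back.
    return bool(charge(2_500, "EUR"))


def strong_test() -> bool:
    # Checks the outcome the customer actually cares about.
    receipt = charge(2_500, "EUR")
    return receipt.amount_cents == 2_500 and receipt.currency == "EUR"


print(weak_test())    # True: the bug passes unnoticed
print(strong_test())  # False: the currency bug is caught
```

Both checks produce identical coverage for `charge`. Only the second one would fail a build.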

This happens when tests are written to satisfy coverage goals rather than to defend user outcomes. A suite full of shallow assertions can produce a comfortable number while leaving serious bugs untouched.

The deeper issue is that code coverage is easy to game. It rewards execution, not intent. A test that touches a line counts the same as a test that thoroughly validates a rule. That makes it a poor proxy for quality unless it is paired with stronger behavioral checks.

2. Branch and path coverage can still miss bad data combinations

Even when teams move beyond line coverage, branch coverage can still hide blind spots. Hitting both sides of an `if` statement does not mean you have tested the right inputs, the right data shape, or the combination that matters in production.

A function might handle valid and invalid inputs, but only fail when a specific combination appears: a null value plus a timezone offset, a discounted item plus a partial refund, or an empty list plus a specific user role. Branch coverage may show both conditions were tested, yet the bug can still hide in the interaction between variables.
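The discounted-item-plus-partial-refund case can be sketched as follows. The `refund_cents` function, its flat 5.00 discount, and the bug are all invented for illustration.

```python
def refund_cents(price_cents: int, discounted: bool, partial: bool) -> int:
    """Hypothetical refund rule with a bug in one combination."""
    amount = price_cents
    if discounted:
        amount -= 500  # a flat 5.00 discount was applied at purchase
    if partial:
        # BUG: halves the full price instead of the discounted amount.
        amount = price_cents // 2
    return amount


# Two tests that together take every branch in both directions.
assert refund_cents(10_000, discounted=True, partial=False) == 9_500
assert refund_cents(10_000, discounted=False, partial=True) == 5_000

# The untested combination over-refunds: the customer paid 9500,
# so half should be 4750, but the function returns 5000.
print(refund_cents(10_000, discounted=True, partial=True))  # 5000
```

Branch coverage is complete after the two passing tests, and the defect only appears when both flags are set together.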

Path coverage sounds more comprehensive, but in real systems the number of possible paths explodes quickly. Most teams cannot test every route through meaningful business logic, especially when feature flags, external dependencies, and user states are involved. So even high branch coverage can give a false sense of completeness.

This is why teams need to think in terms of risk and behavior, not just code structure. The bug usually lives in the combination, not the isolated branch.

3. Integration faults live between components

Many bugs do not live inside a single function at all. They appear in the seams between services, modules, or layers of the application. Unit tests can be excellent at checking isolated logic, but they often miss the failure that happens when components interact.

A classic example is an API that returns a field named one way while the frontend expects it another way. Each side may be fully covered by tests. The backend test validates the response object. The frontend test validates the rendering logic. But the integration between them breaks because the contract drifted.
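Here is a small sketch of that contract drift, assuming a backend that has renamed the field to `username` while the frontend still reads `user_name`. The function names are hypothetical stand-ins for the two sides.

```python
def serialize_user(name: str) -> dict[str, str]:
    """Backend side (hypothetical): the field was renamed to 'username'."""
    return {"username": name}


def render_greeting(payload: dict[str, str]) -> str:
    """Frontend side (hypothetical): still reads the old 'user_name' field."""
    return f"Hello, {payload['user_name']}!"


# Each unit test passes against its own hand-written fixture.
assert serialize_user("Ada") == {"username": "Ada"}
assert render_greeting({"user_name": "Ada"}) == "Hello, Ada!"


def contract_holds() -> bool:
    """Feed the real producer output into the real consumer."""
    try:
        render_greeting(serialize_user("Ada"))
        return True
    except KeyError:
        return False


print(contract_holds())  # False: the drift is only visible end to end
```

The two unit-level assertions give full coverage of both functions. The failure appears only when the producer's actual output meets the consumer's actual expectations.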

The same issue appears with authentication tokens, third-party SDKs, webhooks, queues, databases, or payment providers. A test that mocks the dependency can still pass even if the real integration fails under production conditions.

Coverage tends to be strongest in isolated code and weakest at the boundaries where the system actually fails. That is one reason integration tests, contract tests, and end-to-end tests matter so much. They cover the space where unit-level coverage cannot reach.

4. Environment and configuration issues are invisible to code coverage

A large share of production bugs are not caused by logic errors at all. They come from environment differences: missing environment variables, stale configuration, file permissions, timezone settings, feature flags, deployment differences, browser quirks, or infrastructure mismatches.

Code coverage cannot tell you whether your app works when the API URL is wrong, the database is slow, a secret expires, or a feature flag is toggled unexpectedly. It also cannot detect whether your staging environment behaves differently from production.

This matters because many systems only fail when code meets reality. A feature may pass every test locally, yet break when deployed to a different cloud region, a specific OS version, or a production browser. Coverage does not measure those risks.

Teams that rely too heavily on code coverage often underinvest in environment parity, deployment validation, and configuration testing. That is a gap no percentage can reveal.
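One lightweight form of configuration testing is a check that runs against the target environment's settings before the application starts. The sketch below is an assumption-laden example: the setting names `API_URL` and `DB_TIMEOUT_SECONDS` and the rules applied to them are hypothetical.

```python
REQUIRED_KEYS = ("API_URL", "DB_TIMEOUT_SECONDS")  # hypothetical setting names


def config_problems(env: dict[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means the config looks usable."""
    problems = [f"missing {key}" for key in REQUIRED_KEYS if not env.get(key)]
    url = env.get("API_URL", "")
    if url and not url.startswith("https://"):
        problems.append("API_URL must use https")
    timeout = env.get("DB_TIMEOUT_SECONDS", "")
    if timeout and not timeout.isdigit():
        problems.append("DB_TIMEOUT_SECONDS must be a whole number")
    return problems


good = {"API_URL": "https://api.example.com", "DB_TIMEOUT_SECONDS": "5"}
bad = {"API_URL": "http://localhost:8000"}

print(config_problems(good))  # []
print(config_problems(bad))   # ['missing DB_TIMEOUT_SECONDS', 'API_URL must use https']
```

In a deployment pipeline, the same function could be called with `dict(os.environ)` so that a misconfigured environment fails fast instead of surfacing as a production incident.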

5. Edge cases and negative paths are often under-modeled

A test suite built around happy-path workflows can achieve strong coverage while still ignoring the cases that users actually trigger in the wild. Real users make mistakes, enter incomplete data, disconnect networks, retry actions, switch devices, and abandon flows midway. Bugs often hide in these messy edges.

Negative testing is especially important. What happens when a field is empty? What if a string is too long? What if an API returns a 500? What if the cart is empty, the session has expired, or the invoice is already paid? What if a file upload is interrupted halfway through?

Coverage numbers can rise even when the suite fails to model these scenarios. A test can exercise a form component and still never verify validation failures. It can call a service and still never prove the retry logic works. It can cover the function and still miss the behavior users experience when things go wrong.
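Negative and boundary cases are often easiest to maintain as a table of inputs and expected results. The following sketch assumes a hypothetical `validate_name` function with a 50-character limit.

```python
from typing import Optional

MAX_NAME_LENGTH = 50  # hypothetical limit


def validate_name(value: Optional[str]) -> Optional[str]:
    """Return an error message, or None when the value is acceptable."""
    if value is None or value.strip() == "":
        return "name is required"
    if len(value) > MAX_NAME_LENGTH:
        return "name is too long"
    return None


# Inputs a happy-path suite tends to skip.
NEGATIVE_CASES = [
    (None, "name is required"),
    ("", "name is required"),
    ("   ", "name is required"),
    ("x" * 51, "name is too long"),
]

# Values sitting exactly on the edge of what is allowed.
BOUNDARY_CASES = [
    ("x", None),
    ("x" * 50, None),
]

for value, expected in NEGATIVE_CASES + BOUNDARY_CASES:
    assert validate_name(value) == expected, (value, expected)
```

With a test runner such as pytest, the same table could be fed to `@pytest.mark.parametrize` so each case is reported separately.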

6. UI, visual, and device-specific bugs slip past code-centric metrics

Not all bugs are logical. Some are visual, responsive, or device-specific. A button may be covered by tests and still be hidden under a sticky footer on mobile. A layout may break on a smaller screen. A modal may render correctly in one browser and fail in another. A date picker may work in Chromium but misbehave in Safari. A font change may cause text clipping, overflow, or misalignment.

These defects usually do not show up in code coverage at all. A component test may confirm the element exists, but not that it is visible, readable, tappable, or correctly positioned under real conditions.

This is especially important for teams building customer-facing products where user experience matters as much as backend correctness. Code coverage can say the component ran. It cannot say the interface is usable. That is why visual checks, browser coverage, device coverage, and accessibility testing are such important complements to functional tests.

Real-world examples of bugs coverage misses

Here are a few simple examples that show how high coverage can still miss critical failures.

- A checkout flow may have excellent unit test coverage, but still fail when a discount code interacts with a partially refunded cart. The core pricing function is covered, but the edge case between discount logic and refund logic is not.

- A scheduling app may cover its date functions thoroughly, yet still display the wrong appointment time for users in another timezone. The code runs, but the test never validated timezone conversion for the actual user locale.

- A profile form may show high coverage because every validation function is tested, but the app still crashes on an empty optional field. The tests covered valid strings and obvious invalid input, but not the empty-state path.

- A backend process may work in isolation, but fail under load because two requests race each other and update the same record. Unit coverage says the function works. Production says the concurrency assumption was wrong.

- An API contract may pass all service tests, yet the frontend breaks because the response field name changed from `user_name` to `username`. Each side is covered, but the contract between them is not.

- A responsive product page may pass component tests and still show a broken grid on a specific mobile device. The markup is fine. The rendering environment is not.

These are exactly the kinds of defects that make coverage a partial metric, not a complete one.
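The scheduling example from the list above can be made concrete with a short sketch. The stored appointment, both display functions, and the fixed UTC+2 offset are assumptions chosen for illustration. A real application would more likely use named time zones that account for daylight saving time.

```python
from datetime import datetime, timedelta, timezone

# Appointment stored in UTC (hypothetical record).
APPOINTMENT_UTC = datetime(2024, 3, 10, 23, 30, tzinfo=timezone.utc)


def display_naive(when: datetime) -> str:
    """Buggy: formats the stored value without converting it."""
    return when.strftime("%Y-%m-%d %H:%M")


def display_local(when: datetime, user_tz: timezone) -> str:
    """Converts to the user's offset before formatting."""
    return when.astimezone(user_tz).strftime("%Y-%m-%d %H:%M")


utc_plus_2 = timezone(timedelta(hours=2))  # fixed offset, assumed for the example

print(display_naive(APPOINTMENT_UTC))              # 2024-03-10 23:30
print(display_local(APPOINTMENT_UTC, utc_plus_2))  # 2024-03-11 01:30
```

A test that only checks the UTC rendering covers `display_naive` completely and still lets a user two hours east see the appointment on the wrong day.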

What teams should measure instead

Instead of treating coverage percentage as the main success signal, teams should measure test effectiveness. That means asking whether the test strategy is actually reducing the risk of defects, not just inflating a number.

| Metric | What It Tells You | Why It Matters |
|---|---|---|
| User-flow coverage | Critical journeys tested | Aligns testing with real usage |
| Risk-based coverage | High-impact areas tested | Prevents costly failures |
| Regression escape rate | Bugs reaching production | Measures test effectiveness directly |
| Flaky test rate | Test reliability | Indicates trustworthiness of suite |
| Defect detection rate | Bugs caught pre-release | Shows testing quality |
| Incident rate | Production failures | Connects testing to business impact |

A better set of metrics includes:

- Requirement or user-flow coverage: Are the most important user journeys covered by tests? Do the tests reflect actual product behavior and business priorities?

- Branch and path coverage where relevant: Not every project needs extreme path coverage, but critical logic should be explored beyond line execution.

- Risk-based coverage: Are the highest-impact, highest-change, or most failure-prone areas receiving the most testing attention?

- Regression escape rate: How many bugs are still reaching production after being introduced in previously tested areas?

- Flaky test rate: If tests are unstable, the suite may be generating noise rather than confidence.

- Defect detection rate: How many defects are being found before release versus after release?

- Production incident reduction: Are tests actually helping reduce customer-impacting failures over time?
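Several of these metrics reduce to simple ratios. The sketch below shows one possible way to compute them. The formulas are assumptions, since teams define these rates differently, and the field names and sample numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class ReleaseStats:
    defects_found_before_release: int
    defects_found_after_release: int
    test_runs: int
    inconsistent_runs: int  # runs whose result flipped on an unchanged commit


def defect_detection_rate(s: ReleaseStats) -> float:
    """Share of known defects caught before release (assumed definition)."""
    total = s.defects_found_before_release + s.defects_found_after_release
    return s.defects_found_before_release / total if total else 0.0


def escape_rate(s: ReleaseStats) -> float:
    """Share of known defects that reached production (assumed definition)."""
    total = s.defects_found_before_release + s.defects_found_after_release
    return s.defects_found_after_release / total if total else 0.0


def flaky_rate(s: ReleaseStats) -> float:
    """Share of test runs with an unstable result (assumed definition)."""
    return s.inconsistent_runs / s.test_runs if s.test_runs else 0.0


stats = ReleaseStats(
    defects_found_before_release=45,
    defects_found_after_release=5,
    test_runs=2_000,
    inconsistent_runs=40,
)

print(f"{defect_detection_rate(stats):.1%}")  # 90.0%
print(f"{escape_rate(stats):.1%}")            # 10.0%
print(f"{flaky_rate(stats):.1%}")             # 2.0%
```

Tracked release over release, the trend in these numbers says more about test effectiveness than any single coverage percentage.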

These QA metrics connect testing to business outcomes. They do not replace coverage entirely, but they put it in context. A team with slightly lower coverage and far fewer escaped defects is doing better than a team with a high percentage and frequent production bugs.

How to improve coverage without chasing vanity metrics

Improving test quality does not mean abandoning coverage. It means using coverage more intelligently. A healthy test strategy is one that reveals risk early.

- Start by mapping tests to critical user journeys. Focus on the paths that matter most to revenue, retention, or trust. If a workflow is central to the product, it deserves strong validation from multiple angles.

- Add assertions for business rules, not just function calls. A test should verify the output that matters, the state change that matters, or the side effect that matters. Otherwise, the test runs the code without proving anything about it.

- Include negative cases and boundary values. Test empty inputs, maximum lengths, invalid states, rejected permissions, expired sessions, and downstream failures. Bugs often appear where assumptions fail.

- Test integrations and failure states. Mocking has a place, but it should not be the whole strategy. Validate API contracts, external dependencies, and retry logic with realistic scenarios.

- Expand coverage across devices, browsers, data sets, and environments. The more user diversity your product has, the more important it is to test beyond a single local setup.

- Review tests for blind spots, not just percentages. Ask what the suite is not proving. What assumptions are unchallenged? What user behavior is missing? What dependency is unverified?
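As one illustration of testing failure states, the sketch below exercises retry logic against a test double that fails a fixed number of times. `FlakyGateway` and `call_with_retry` are hypothetical names, and the retry helper omits backoff and delays to keep the example short.

```python
from typing import Callable


class FlakyGateway:
    """Hypothetical test double: fails a set number of times, then succeeds."""

    def __init__(self, failures_before_success: int) -> None:
        self.remaining_failures = failures_before_success
        self.calls = 0

    def send(self) -> str:
        self.calls += 1
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            raise ConnectionError("simulated downstream failure")
        return "ok"


def call_with_retry(operation: Callable[[], str], max_attempts: int = 3) -> str:
    """Retry on ConnectionError; re-raise once attempts are exhausted."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts:
                raise
    raise RuntimeError("unreachable")


# Recovers after two failures, using exactly three calls.
recovering = FlakyGateway(failures_before_success=2)
assert call_with_retry(recovering.send) == "ok"
assert recovering.calls == 3

# Gives up after three attempts when the dependency stays down.
failing = FlakyGateway(failures_before_success=5)
try:
    call_with_retry(failing.send)
    gave_up = False
except ConnectionError:
    gave_up = True
assert gave_up and failing.calls == 3
```

The second scenario is the one happy-path suites usually skip: it confirms the caller stops retrying and surfaces the error instead of looping forever.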

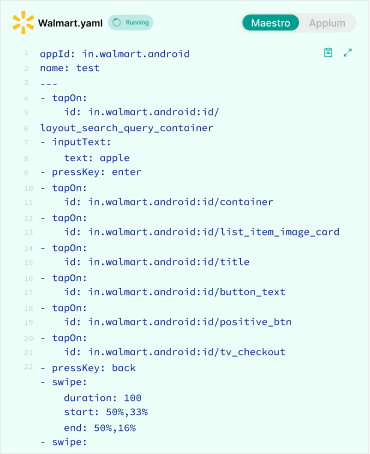

Where AI-driven testing helps

This is where AI-driven testing becomes especially valuable. The goal is to help teams see blind spots in their tests faster than manual review alone would.

AI can generate additional test cases from requirements, user stories, and code changes. That helps teams uncover edge cases they might not think to write manually. It can also suggest missing scenarios, prioritize high-risk areas, and adapt test generation as the application changes.

For example, AI can help a team ask better questions:

- What happens if the input is null?

- What if the API is slow?

- What if the user retries after a failed payment?

- What if a layout breaks at a specific screen width?

- What if a contract field changes unexpectedly?

It can also reduce the maintenance burden that often makes teams avoid broader testing. When tests become brittle or expensive to update, teams tend to narrow their scope. AI can help keep the suite relevant without turning test upkeep into a bottleneck.

Used well, AI helps shift testing from reactive coverage chasing to proactive risk discovery. That is a much stronger position for modern engineering teams.

Conclusion

High test coverage is useful, but it is not proof of quality. A suite can look complete and still miss real bugs if it does not validate outcomes, model risk, cover integrations, and reflect actual user behavior.

The teams that ship fewer defects are not the ones that chase the biggest coverage number. They are the ones that measure test effectiveness, focus on critical flows, and keep expanding their view of what “covered” really means.

Coverage is a signal. It is not a guarantee.

For teams looking to go beyond vanity metrics, AI-driven testing can help identify gaps, generate stronger scenarios, and keep pace with changing code. That is the kind of testing maturity that turns coverage into confidence.