Code review is breaking.

Not because engineers stopped reviewing code—but because the scale and complexity of modern changes have outpaced human bandwidth.

Large pull requests, infrastructure-as-code, multi-service dependencies, and tight release cycles have made traditional review workflows noisy, slow, and inconsistent.

AI is now stepping in to fix this. Teams are embedding LLMs directly into their code review pipelines to:

- summarize pull requests,

- detect bugs and regressions,

- flag risky changes,

- and reduce reviewer fatigue.

But once you decide to adopt AI for code review, a harder question emerges:

Which LLM should power your pipeline—OpenAI, Anthropic, or a local model?

There is no universally “best” option. The right choice depends on how your pipeline is built and what constraints you operate under.

What an AI code review pipeline actually needs from an LLM

A useful code review model does more than generate nice prose. It has to understand a diff, track dependencies across files, infer intent, detect regressions, and produce feedback that engineers trust.

In practice, the model is sitting inside a system that also includes repository context retrieval, rules, thresholds, guardrails, and human review.

The model is only one layer of the pipeline, but it strongly shapes review quality and developer adoption.

Core capabilities required for code review

| Capability | Why it matters |

|---|---|

| PR summarization | Helps reviewers understand the change quickly |

| Bug detection | Surfaces correctness issues early |

| Maintainability feedback | Improves long-term code quality |

| Test awareness | Catches missing or weak test coverage |

| Security signals | Flags risky patterns before merge |

At a minimum, the LLM must handle:

- PR summarization across multiple files and commits

- Bug and regression detection based on code changes

- Code quality feedback (readability, maintainability)

- Test awareness (missing, weak, or broken tests)

- Security signals (common vulnerabilities, unsafe patterns)

OpenAI positions GPT-4.1 as a model with strong instruction following and tool calling, plus a 1M-token context window and low latency without a reasoning step.

That profile fits review workflows that need fast, direct output inside CI and PR bots.

Anthropic’s Claude Sonnet 4.6 is built for complex coding and long-context workflows, with a 1M-token context window in beta and pricing starting at $3 per million input tokens and $15 per million output tokens.

Anthropic also reports that Sonnet 4.6 achieved 79.6% on SWE-bench Verified, a real-world software engineering benchmark.

That makes it especially relevant for large diffs, multi-file reasoning, and review flows where the model needs to keep track of more than one local change at a time.

Local models are most compelling when control matters more than turnkey convenience.

Meta’s Llama 3.1 line is designed to be fine-tuned, distilled, and deployed anywhere, and the 3.1 8B, 70B, and 405B models support text and code output with a 128k context window.

In Meta’s reported benchmarks, Llama 3.1 405B scored 89.0 on HumanEval and 88.6 on MBPP++ base version, which is strong evidence that open-weight models can be viable for code-centric workflows when the surrounding system is well engineered.

Why code review is harder than code generation

Code review is not the same as code completion.

A review assistant needs to compare old and new behavior, understand intent, infer side effects, and make judgments under uncertainty. That is why benchmark choice matters.

SWE-bench Verified is a human-validated subset of 500 real software engineering problems, so it is more relevant than generic language benchmarks when you are evaluating code-review-adjacent behavior.

Still, no single benchmark captures the full quality of a review pipeline, because review quality also depends on hallucination rate, false-positive noise, test awareness, and how often developers accept the model’s comments.

Operational requirements in CI/CD

The best pipeline is usually not the one with the most capable model on paper. It is the one that produces the right signal at the right time with the least reviewer friction.

Beyond capability, the model must behave predictably in a production pipeline:

- Low latency to avoid blocking developer workflows

- Consistent outputs (low hallucination, low noise)

- Large context handling for real-world PR sizes

- Seamless integration with GitHub, GitLab, Bitbucket

- Cost predictability as usage scales

A model that performs well in isolation can still fail if it’s too slow, too expensive, or too inconsistent in CI.

Enterprise constraints that shape model choice

For enterprise teams, you also need access control, auditability, data handling guarantees, and a deployment path that security teams will approve.

- Data privacy and code security

- Compliance requirements (SOC2, HIPAA, etc.)

- Auditability and logging

- On-prem or VPC deployment needs

These constraints often eliminate entire categories of models before evaluation even begins.

OpenAI vs Anthropic vs Local LLMs for code review

Each approach represents a different tradeoff between performance, control, and operational complexity.

1. OpenAI: the fastest path to a production-ready reviewer

OpenAI’s GPT-4.1 is designed for high-performance, real-time applications.

It supports a ~1 million token context window and is priced at approximately $2 per million input tokens and $8 per million output tokens.

On SWE-bench Verified—a benchmark based on real software engineering tasks—GPT-4.1 scores 54.6%, indicating strong general-purpose coding and reasoning ability.

Where OpenAI performs best

- Fast integration via API (minimal infra overhead)

- High-quality PR summaries and explanations

- Strong instruction-following for structured reviews

- Reliable performance across diverse codebases

Where it falls short

- External dependency (data leaves your environment)

- Usage-based costs can scale quickly

- Requires guardrails to reduce noisy or redundant comments

Best fit: Teams that want a fast, reliable default without building ML infrastructure.

2. Anthropic: optimized for long-context and complex reasoning

Anthropic’s Claude Sonnet 4.6 is built for large-context reasoning, with support for up to 1 million tokens (beta).

It is priced at $3 per million input tokens and $15 per million output tokens.

On SWE-bench Verified, it scores 79.6%, significantly higher than many alternatives—making it one of the strongest publicly reported models for real-world software tasks.

Where Anthropic performs best

- Handling large PRs and multi-file diffs

- Maintaining context across complex changes

- Producing structured, instruction-following outputs

- Deep reasoning across code + tests + configs

Where it falls short

- Higher cost, especially for large inputs

- API dependency similar to OpenAI

- Potential latency tradeoffs in CI pipelines

Best fit: Teams dealing with large, complex codebases where context retention is critical.

3. Local LLMs: control, privacy, and customization

Local models such as Llama 3.1 (8B, 70B, 405B) can be deployed within your own infrastructure.

These models support text and code tasks with up to 128k context windows.

On code benchmarks:

- HumanEval: 89.0 (Llama 3.1 405B)

- MBPP++: 88.6

These scores show that open-weight models can be competitive—especially when tuned for specific workflows.

Where local models perform best

- Full control over code and data

- Suitable for regulated or air-gapped environments

- Lower marginal cost at high scale

- Custom fine-tuning for domain-specific review

Where they fall short

- Lower out-of-the-box reliability

- Requires infra (GPUs, serving, monitoring)

- Needs continuous tuning and evaluation

Best fit: Enterprises with strict security requirements or strong ML/infra capabilities.

OpenAI vs Anthropic vs Local LLMs: Side-by-Side Comparison for Code Review Pipelines

| Criterion | OpenAI (GPT-4.1) | Anthropic (Claude Sonnet 4.6) | Local models (Llama 3.1 405B example) |

| Best fit for code review | Fast, strong default reviewer for PR summaries, diff explanations, and instruction-following in CI workflows. | Best for large, complex PRs and workflows that need strong long-context reasoning. | Best when privacy, deployment control, or air-gapped/self-hosted requirements matter most. |

| Context window | Up to 1M tokens. | 1M tokens in beta on the API. | 128k tokens. |

| Pricing | $2 / 1M input tokens and $8 / 1M output tokens for GPT-4.1. | $3 / 1M input tokens and $15 / 1M output tokens for Sonnet 4.6. | No vendor API fee; cost depends on your own infrastructure and serving stack. Llama 3.1 is designed to be deployed anywhere. |

| Public coding benchmark signal | 54.6% on SWE-bench Verified. OpenAI also says GPT-4.1 is stronger than GPT-4o on coding tasks and diff handling. | 79.6% on SWE-bench Verified. Anthropic says Sonnet 4.6 improves coding, consistency, and instruction following. | 89.0 HumanEval and 88.6 MBPP++ for Llama 3.1 405B Instruct on Meta’s published benchmark table. |

| Security / control | Hosted API model; simpler to adopt, but code leaves your environment. | Hosted API model; strong enterprise fit, but still external. | Highest control because you can fine-tune, distill, and deploy locally. |

| Main tradeoff | Best balance of capability + ease of deployment, with external dependency. | Stronger long-context reasoning, usually at a higher per-token cost. | Best control, but highest ops burden and more tuning/evaluation work. |

What the benchmarks actually tell you

The most relevant public benchmarks point in a consistent direction.

OpenAI’s GPT-4.1 is a strong general code reviewer with 54.6% on SWE-bench Verified and a 1M-token context window.

Anthropic’s Sonnet 4.6 is stronger on SWE-bench Verified at 79.6% and also offers 1M-token context in beta.

Meta’s Llama 3.1 405B shows that open-weight models can be competitive on code generation benchmarks, with 89.0 on HumanEval and 88.6 on MBPP++, while remaining deployable anywhere.

Key takeaway:

- Anthropic leads in complex reasoning

- OpenAI offers strong general performance + speed

- Local models are competitive but system-dependent

However, none of these benchmarks measure:

- false positives in reviews

- developer trust

- CI/CD integration performance

Those factors matter just as much in production.

Where each approach Wins

OpenAI tends to win when you want the fastest deployment path, strong general-purpose behavior, and low-friction API integration.

Anthropic tends to win when your PRs are large, your prompts are more structured, and long-context reasoning is central to the workflow.

Local models tend to win when privacy, residency, or infrastructure control matters enough to justify the ops burden. That is the real decision tree: convenience, reasoning depth, or control.

Where Each Option Breaks Down

OpenAI and Anthropic both introduce external dependency, usage-based cost, and policy complexity around sensitive code.

Local models avoid the API dependency, but they often require more tuning and more careful evaluation to match the reliability of hosted frontier models.

In other words, local deployment solves one class of risk while introducing another. That is why many mature teams end up with a hybrid architecture rather than a pure one.

- OpenAI / Anthropic

- Limited control over data flow

- Ongoing API costs

- Dependency on external providers

- Local LLMs

- High operational complexity

- Performance depends on tuning

- Requires evaluation pipelines

Which stack should you choose for your code review pipeline?

The right choice depends less on model quality and more on pipeline constraints and priorities.

OpenAI is the right choice:

- You want fast deployment

- You prioritize developer productivity

- You don’t have strict data constraints

Choose Anthropic if:

- You handle large, complex PRs

- Context retention is critical

- You need stronger reasoning depth

Select local LLMs when:

- You require data privacy or on-prem deployment

- You operate in regulated environments

- You can support ML infrastructure

The hybrid approach: what most teams end up building

For most teams, the highest-ROI pattern is hybrid.

Use a local model for sensitive repositories, first-pass triage, or low-risk classification. Route larger, more ambiguous, or higher-value diffs to a hosted model for deeper reasoning.

- Local model for:

- Sensitive repositories

- Initial triage and filtering

- Hosted model for:

- Deep reasoning

- Complex PR analysis

This approach:

- reduces cost,

- improves security,

- and maintains high review quality.

What matters more than the model itself

A good code review pipeline does not stop at model choice.

It needs scoped repository context, stable prompts, evaluation datasets, thresholds for when to comment versus stay silent, and a feedback loop that learns from accepted and rejected suggestions.

High-performing code review pipelines invest in:

- Prompt design and evaluation

- Repository-aware context retrieval (RAG)

- Noise reduction (avoiding unnecessary comments)

- Rule-based guardrails

- Feedback loops from developers

Even a top-tier model will fail if the surrounding system is poorly designed. The more reliable your surrounding system, the less you depend on any single model’s raw behavior.

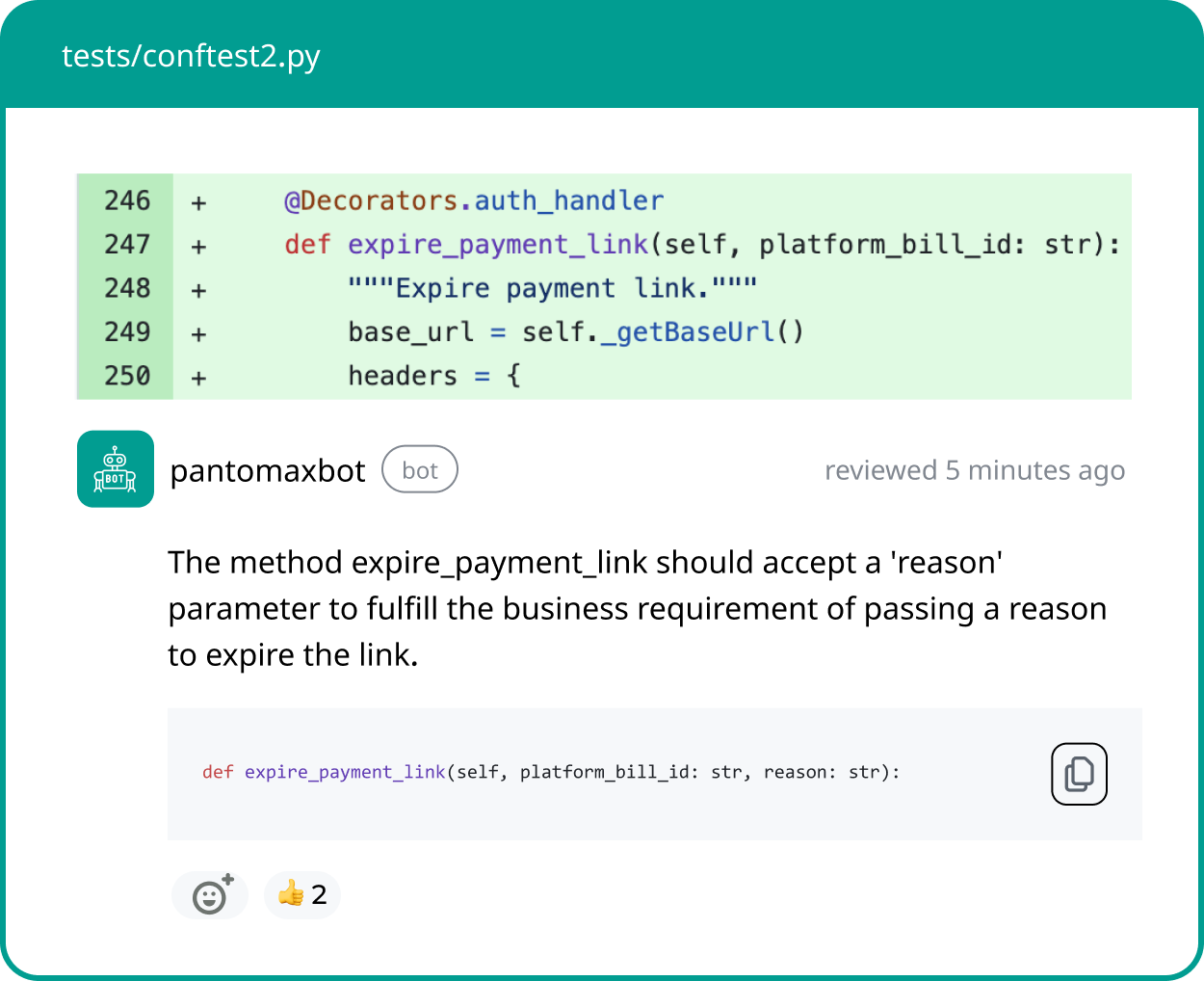

Where Panto AI fits

The real problem is not choosing a model—it’s making code review reliable at scale.

Panto AI focuses on:

- reducing noisy or irrelevant comments,

- improving signal in PR reviews,

- supporting both hosted and local model deployments,

- and unifying review across:

- application code,

- infrastructure-as-code,

- and test suites.

Instead of locking teams into a single model, the goal is to build a model-agnostic, reliable review pipeline.

Conclusion

OpenAI, Anthropic, and local LLMs each represent a different approach to AI-powered code review:

- OpenAI offers speed and simplicity

- Anthropic offers depth and context

- Local models offer control and flexibility

But the most effective pipelines are not built around a single model. They are context-aware, hybrid and continuously optimized.

As AI becomes a standard part of code review, the advantage will not come from model choice alone, but from how well the entire system is designed around it.